Printed in the United States of America.

Copyright © 2010, 2012 Alec Rogers

2012-04-27

| Revision History | |
|---|---|
| Revision 1.0 | April 2012 |
| First Edition. | |

This book is dedicated to all the people with whom I ever ate lunch, many of whom were forced to discuss the nature of existence of the salt and pepper shakers.

Also, I would like to thank the salt and pepper shakers: I never doubted you for an instant.

Table of Contents

List of Figures

- 2.1. Nominal Dimensions

- 2.2. Ordinal Dimensions

- 2.3. Interval Dimensions

- 2.4. Separate Hierarchies

- 2.5. Venn Diagram

- 2.6. Combined Hierarchies

- 2.7. A Meronomy

- 2.8. A Taxonomy

- 2.9. A Discontiguous Meronomy

- 2.10. An Atom: Zero Dimensions

- 2.11. A Line: One Dimension

- 2.12. A Plane: Two Dimensions

- 2.13. A Space: Three Dimensions

- 2.14. A Timeline: Four Dimensions

- 2.15. Multiple Timelines: Five Dimensions

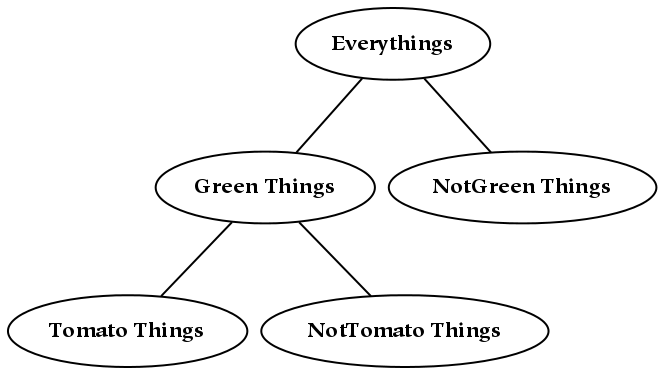

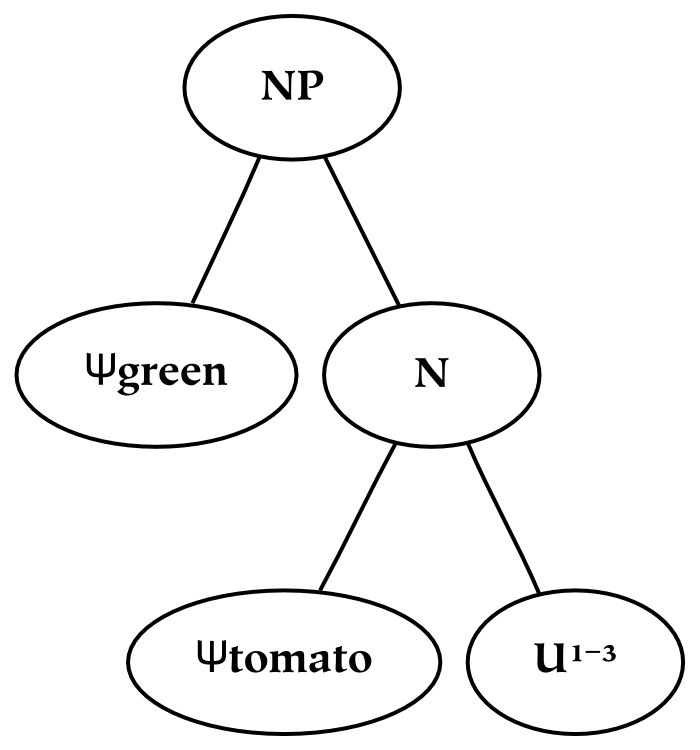

- 3.1. Green Tomatoes

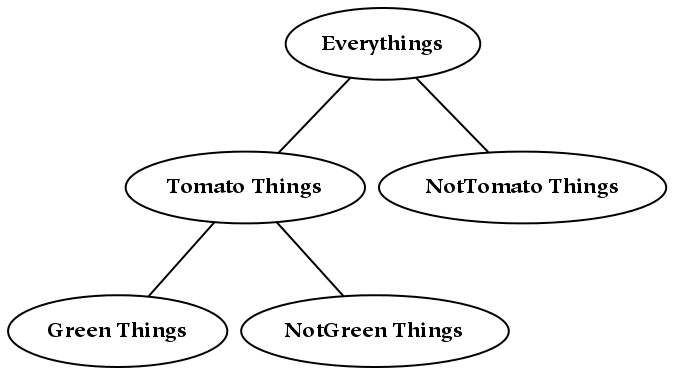

- 3.2. Tomatoes, Green

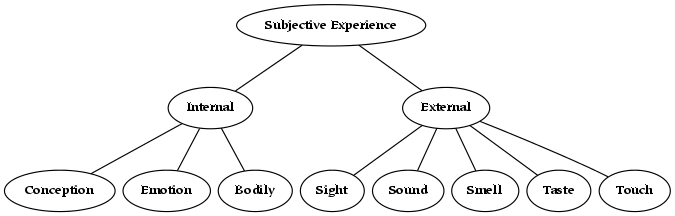

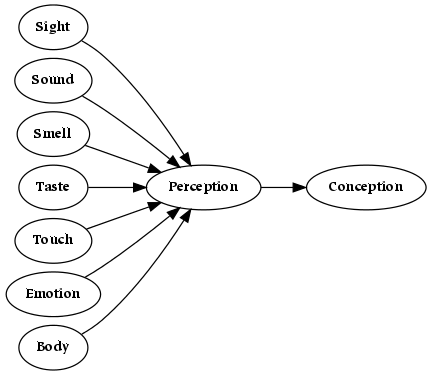

- 5.1. Senses

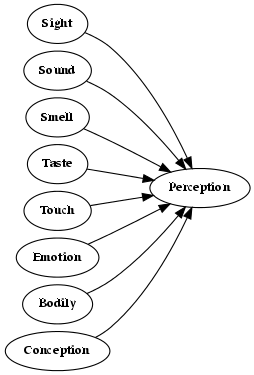

- 5.2. Western Sense Model

- 5.3. Eastern Sense Model

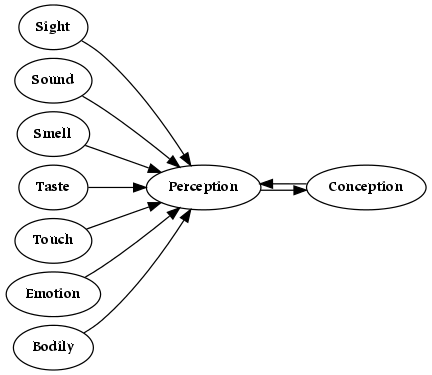

- 5.4. Combined Model of Sensation

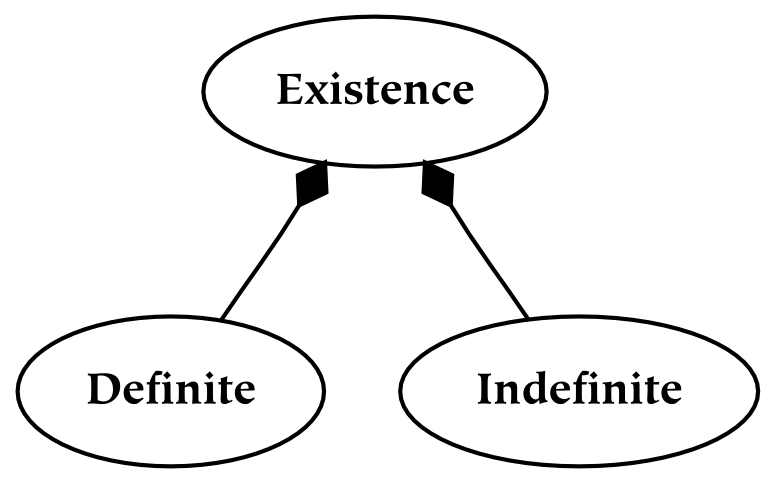

- 6.1. The the

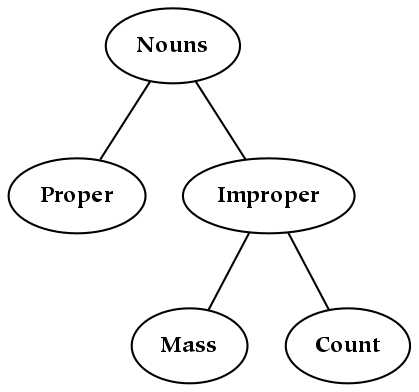

- 6.2. A Taxonomy of Several Types of Nouns

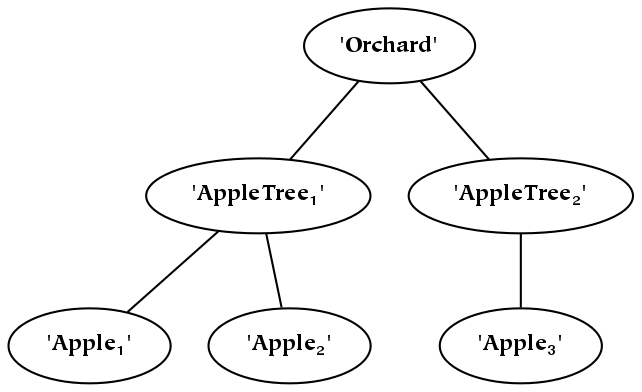

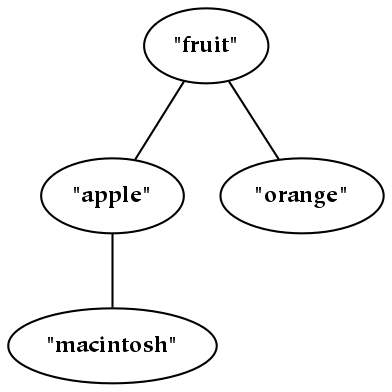

- 6.3. Apple Percepts

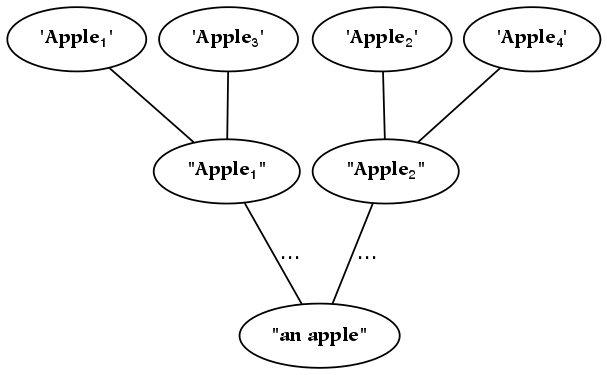

- 6.4. Apple Concepts

- 7.1. Blind Spots

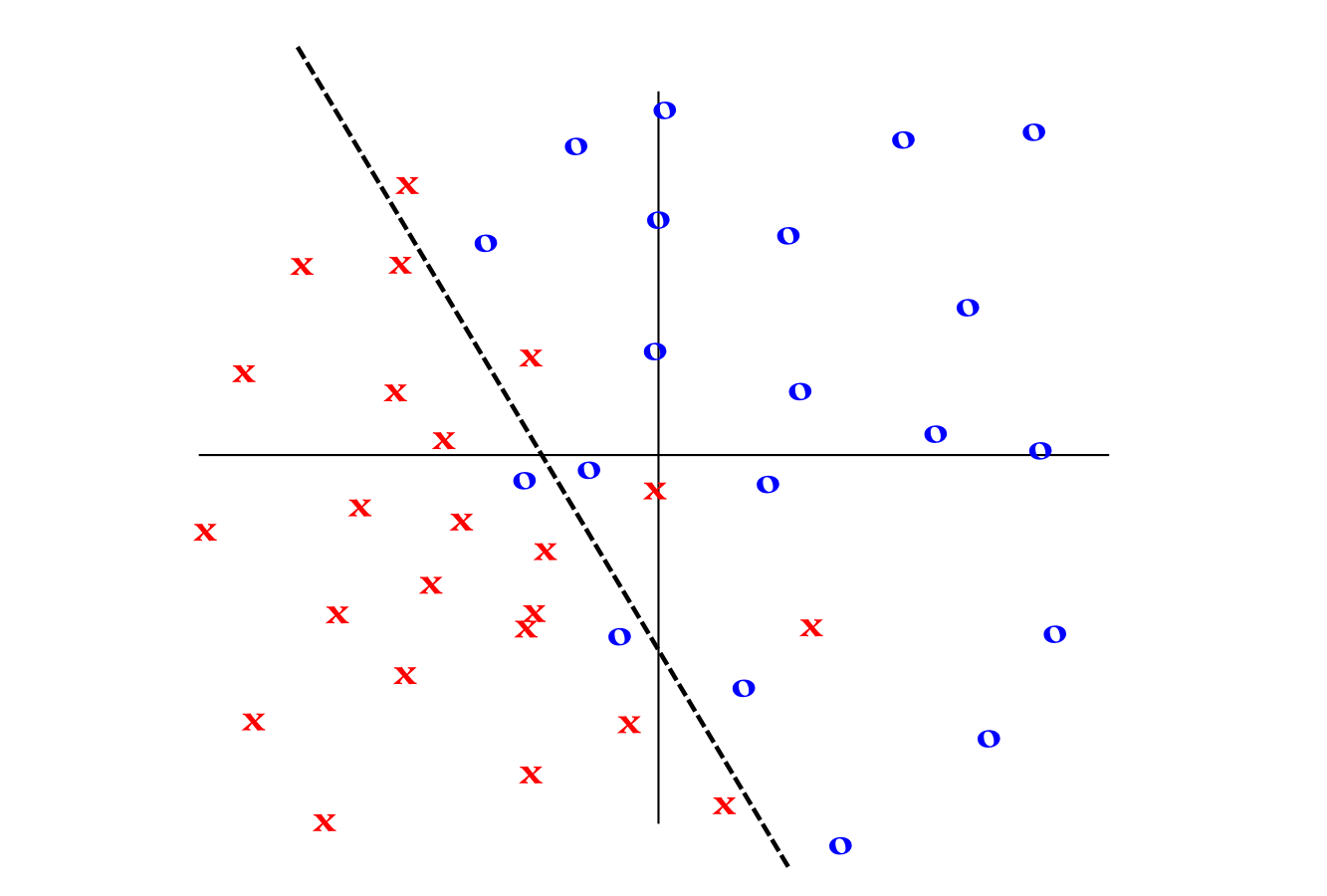

- 8.1. A Decision Boundary

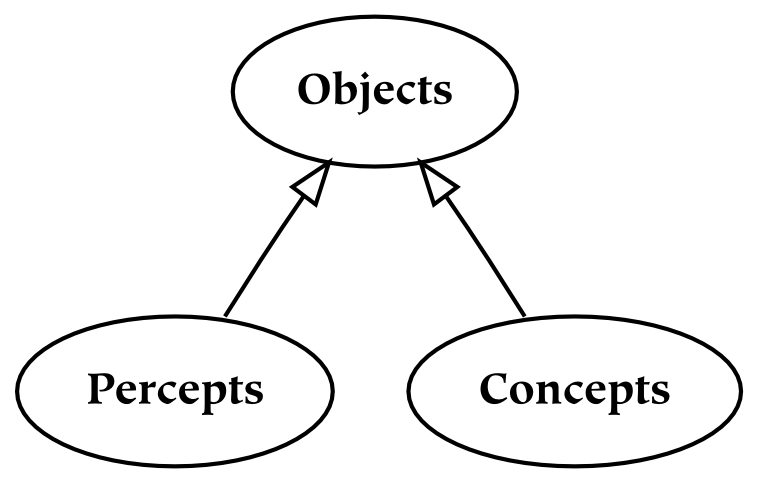

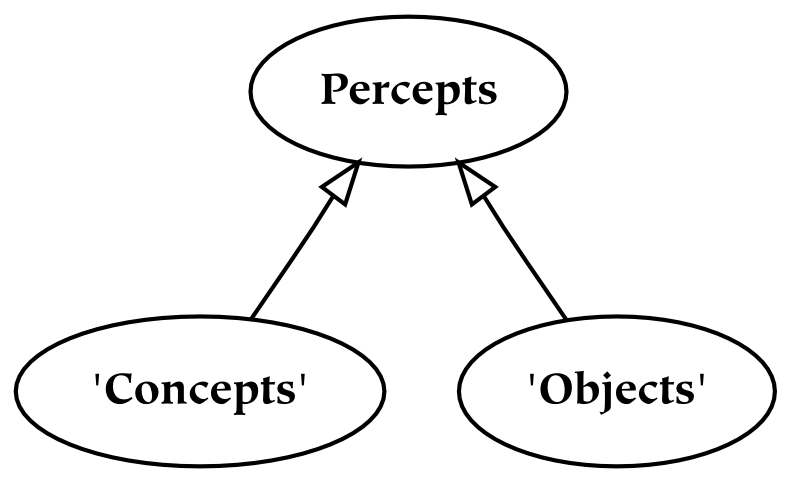

- 11.1. The Cognitive Structure of Nouns

- B.1. Things

- B.2. Relations

- B.3. Parts

- B.4. References

- B.5. The References Between the Universes

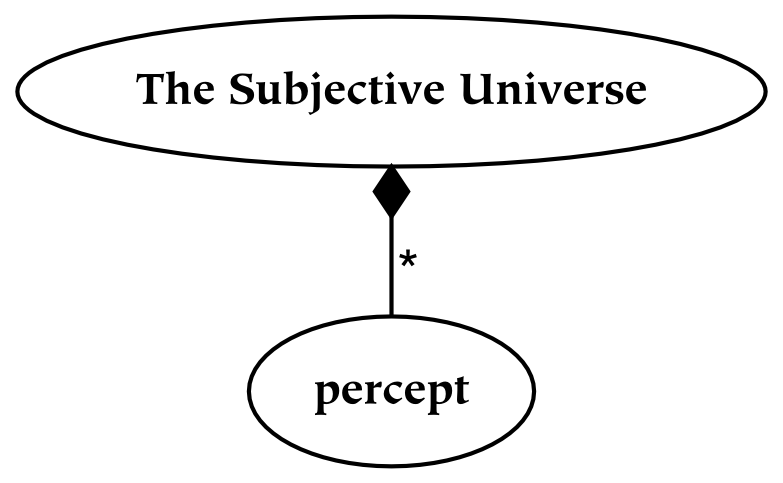

- B.6. The Meronomy Depicting the Universes

- B.7. The Universes

List of Tables

List of Equations

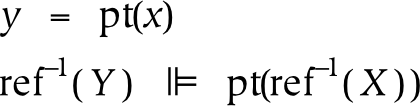

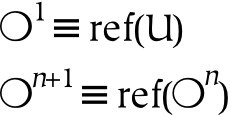

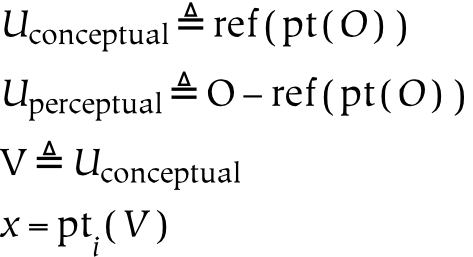

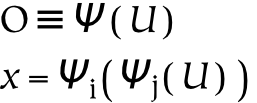

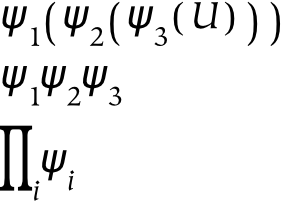

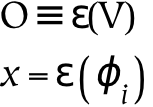

- 11.1. Universe

- 11.2. Reference

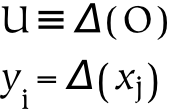

- 11.3. Parts

- 11.4. Negation

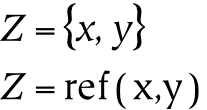

- 11.5. Set

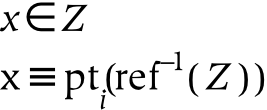

- 11.6. Element

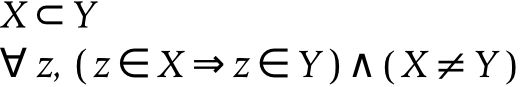

- 11.7. Subset

- 11.8. Atoms

- 11.9. Universes

- 11.10. The Empty Set (Nothing)

- 11.11. The Full Set (Everything)

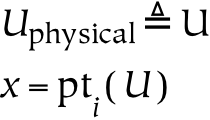

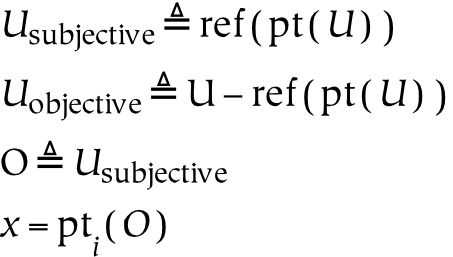

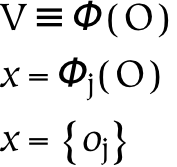

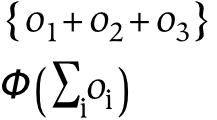

- 11.12. Physical Universe

- 11.13. Subjective Universe

- 11.14. Conceptual Universe

- 11.15. Perception

- 11.16. Dichotomy

- 11.17. Conception

- 11.18. Collection

- 11.19. Naming

- 11.20. Communication

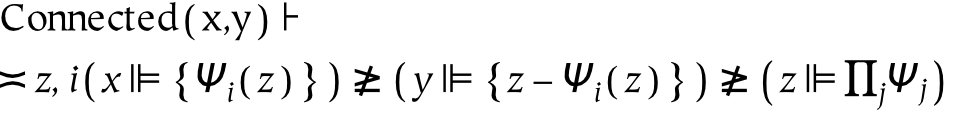

- 11.21. Connection

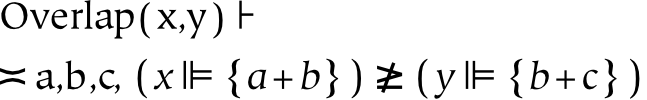

- 11.22. Overlap

- 11.23. Conceptual Order

- 11.24. Dimensionality

- 11.25. Hierarchy

- 11.26. Identity

- 11.27. Intrinsic Identity

- 11.28. Extrinsic Identity

- 11.29. Referential Identity

- 11.30. Isomorphic Identity

- 11.31. Classical Logic

- 11.32. Existential Quantifiers

- 11.33. Properties

- 11.34. Nouns as Applied Adjectives

- 11.35. Statements Expressing Relations

- 11.36. Statements Expressing Things

What is this thing?

This book is a study of unity, multiplicity, and references. It examines the world, our experience of it, and our thought about it, while focusing on the relation of the part to the whole. It is about our concepts: how they are formed, how they are shaped by the world, and how they in turn shape the world. It is mildly ironic to examine reality by first taking it apart and then putting it back together: perhaps that is my karma as an engineer.

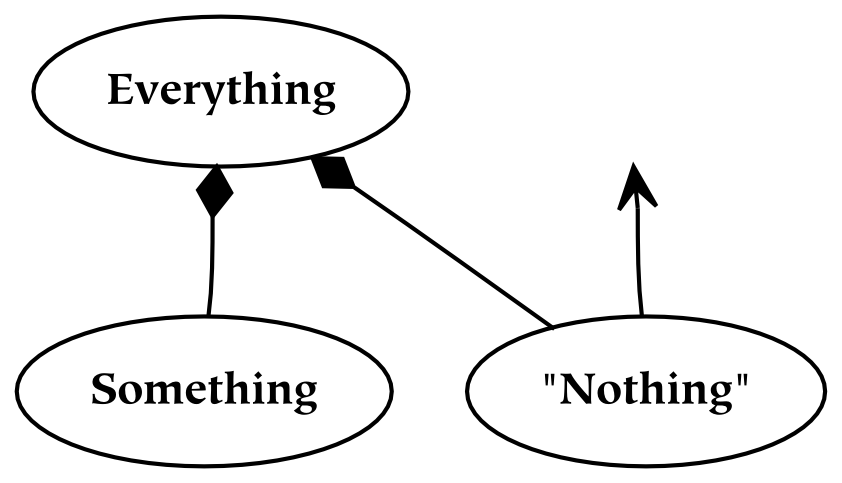

This book is also a study of three general types of things: everything, something, and nothing. These things are examined from three points of view: the physical, the subjective, and the conceptual. One of the main goals of this book is to develop a somewhat formal language for cognition: to do so, it relies heavily on the sciences of set theory and mereology.

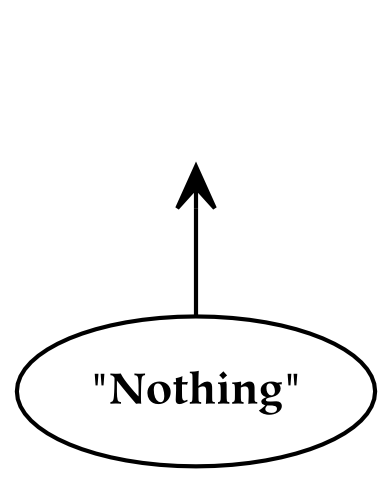

This book takes primarily a holistic, or nondualistic, perspective: in other words, it begins by examining everything. It then proceeds to examine something, which is formed by dividing everything. This division is often carried out hierarchically: parts themselves are subdivided, thereby forming a structure that resembles a tree. This nondualistic orientation holds that the whole comes before (or is ontologically prior to) its parts. Last but not least, nothing is discussed, in no small part because it nicely complements the discussion of everything. Nothing is also significant in virtue of being a reference: references are those things which allow us to build concepts out of smaller constituents, and whose manipulation is called thought.

Why did I write this thing?

I wrote this book in order to share several simple ideas. These ideas pertain to the relations between parts, wholes, and references, which are familiar subjects to all of us. But despite their frequent use, these subjects receive relatively little attention. This book attempts to remedy that situation: it strives to lay a broad foundation for thinking about parts, wholes, and references from a number of different points of view.[1]

Why should you read this thing?

Perhaps you have some interest in the organization and operational principles of our material and mental lives. Perhaps you have an affinity for a “holistic” or “nondualistic” approach, and you would like to understand more about the relationship of parts to wholes and how that influences, and is influenced by, cognition. You might also be interested in understanding the parallels between set theory and cognition.

Although the subject matter of this book is wide-ranging, most of it is related to parts, wholes, and references as these things relate to the structures behind language and cognition. The title is indicative of this subject matter: cognition (psychology) and set theory (mathematics) are interwoven. Since the technical details of this endeavor are of interest only to a small portion of readers, the first several parts of this book are relatively informal; the formal details are presented in the last part of this book.

What is the structure of this thing?

This book is written in four parts.

The first part of this book is an introduction to things. Things are split into three general types: everythings, somethings, and nothings. This three-part division of things is based on the space which things occupy; a thing is defined in terms of its spatial boundaries.

Space, in the sense that term is used in this book, is not limited to a single physical (three-dimensional) space: it may be multidimensional (i.e. one which may have an arbitrary number of dimensions, and which is sometimes called N-space) or even a conceptual space. The dimensionality of the space is assumed to be equivalent to that of the objects in it: for example, four-dimensional space is necessary to contain four-dimensional things. As an example, a four-dimensional thing could be a three-dimensional thing that occupies a temporal extent (i.e. a single, continuing three-dimensional thing may be considered to be a four-dimensional thing).[2]

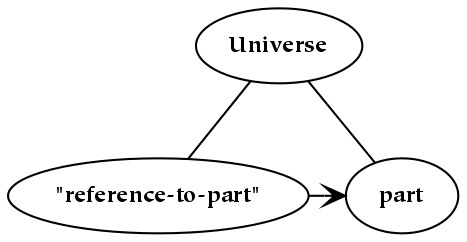

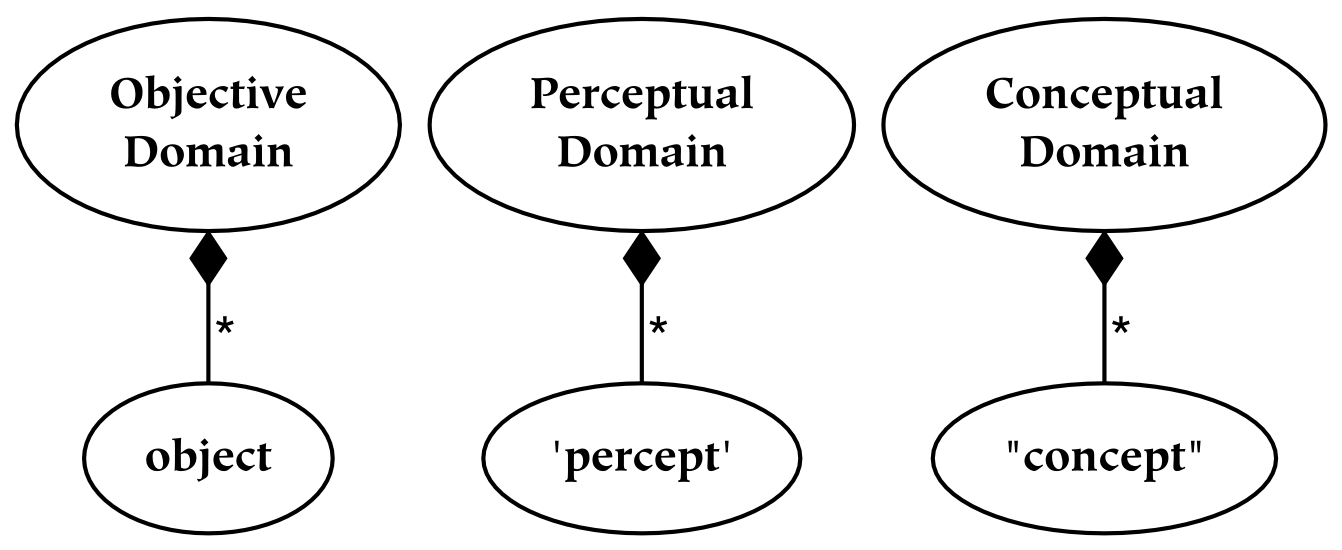

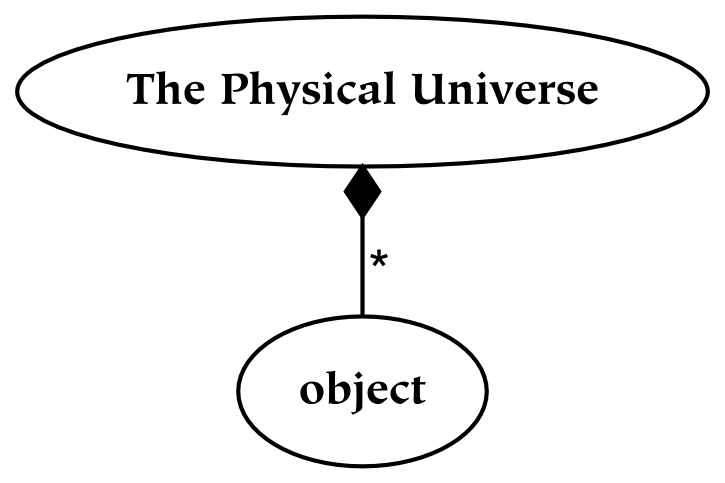

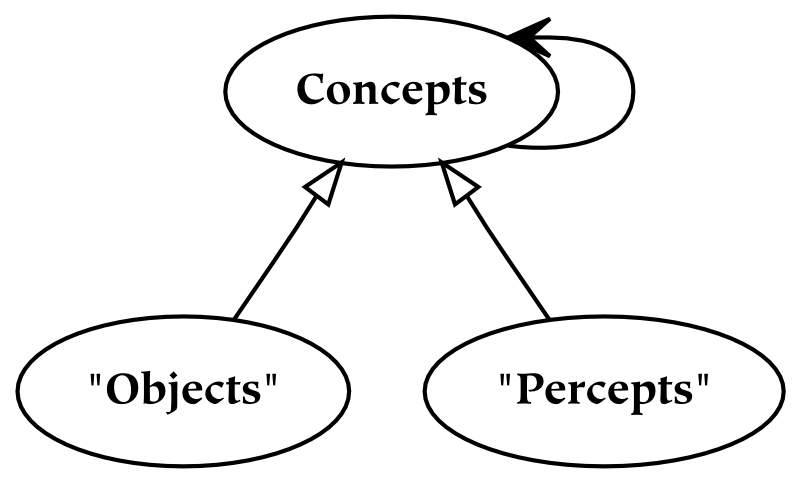

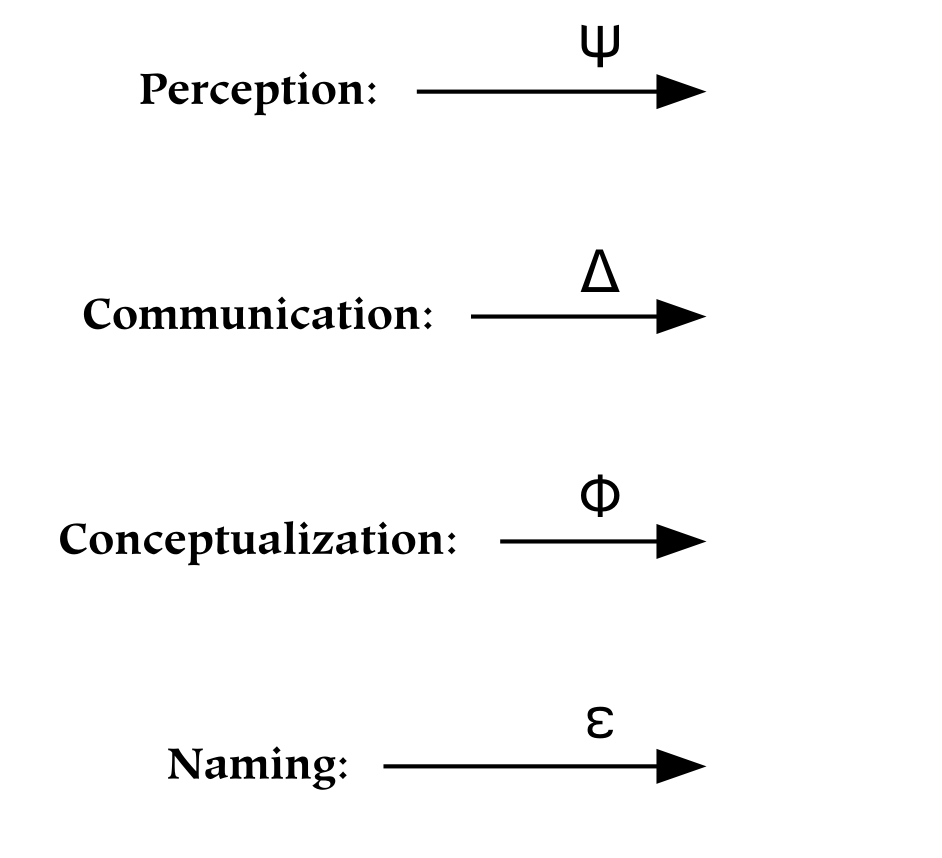

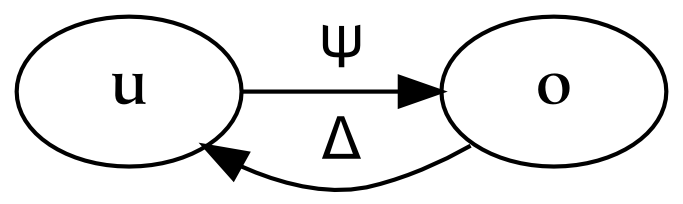

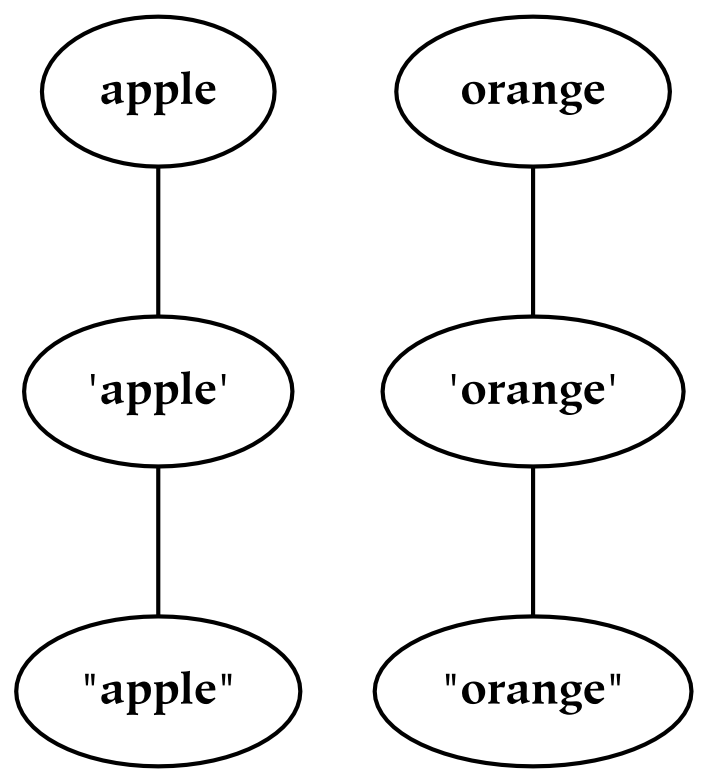

The second and third parts of this book discuss universes and several primary relations between these universes, respectively. The universes are created by successively partitioning the physical universe. First, the physical universe is divided into two parts, the subjective and the objective. The subjective part is further divided into perceptual and conceptual parts. In this way, three things are created, which are called universes. The reason for partitioning everything in this way, as opposed to some other, is that the resulting parts are composed of references: the conceptual universe refers to the subjective universe, which in turn refers to the physical universe.

References form the basis of universes: the division between one universe and another similarly divides the referrers from the referents. For example, the subjective universe contains references to the objective universe. From the subjective point of view, these references are responsible for perception. From the objective point of view, references are physical things just like any other. This dual characteristic of references is what makes them so special, and what makes the boundaries between universes composed of references so odd.

The fourth and final part of this book is aimed at technically-oriented readers: it offers a more formal summary of most topics discussed in the book.[3] Finally, the various appendices should be treated as reference material for the rest of the book: it is advisable to at least skim that section first.

[1] I have a passion for mathematics, philosophy, and psychology, which I anticipate many readers will share. Despite my passion, I sometimes find the presentation of these subjects somewhat impenetrable. Therefore, a primary aim of this book is to make mathematics relevant, philosophy unconvoluted, and psychology beautiful.

[2] For some readers, it may clarify things to think in terms of “events” and “spacetime” instead of in terms of “things” and “space”, since the former terms connote having more than three dimensions. However, the former terms imply four-dimensional physical entities: in the general case, being limited to either four dimensions or the physical world is undesirable (since this might exclude such things as perceptual spaces).

[3] The summary of the book does not contain a summary of the entire book, but only a summary of the parts of the book that have been written before the summary.

In a general sense, there are three types of objects: everythings, somethings, and nothings. In a universe, there can exist only one everything, many somethings, and exactly zero nothings.


Everything means every thing, taken together. Although it may be conceptualized as a single unit, it is best to regard everything as something which is neither singular nor plural (because the concept of singularity requires the concept of plurality).

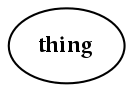

We have depicted everything in the diagram above. We have not bothered to label it, because there is nothing from which to distinguish it. We have drawn a boundary around it to help to visualize it, but this is somewhat mistaken since everything is unbounded: the boundary is not present if everything is recognized.

Everything cannot be defined.

Everything cannot be defined. It is impossible to say what it is, and it is impossible to say what it is not. Everything is a whole which initially is without parts and without the lack of parts. Despite the fact that it serves as the starting point, it is that thing which (at least from one point of view) cannot be transcended.

Everything occupies every position in all dimensions which are attributed to it.

It is difficult to define everything, since there is nothing to which it can be compared (other than itself). Definitions are always given in terms of other things, so it is impossible to define a thing for which there is no other thing. There is no other when it comes to “the one without a second”.

This book begins with everything, which seems appropriate given that our mental worlds begin in the same way. Conceptually, it is difficult to understand everything. Everything should not be understood as many things taken together or as a single whole; both of these concepts are limiting, and they cannot be applied to everything without thereby restricting its scope to some portion of itself. Everything should not be understood as “every object at a single instant in time”, but as everything-everywhere and everything-everywhen. In this sense everything can be considered an event, because it has both a spatial and a temporal extent.[4] Again, the extension of the word “everything” in the world (i.e. the object that the word refers to) has no thing outside of it. If one believes in multiple universes, then these multiple universes should also be included in the concept of everything.

Although it is somewhat mistaken to make a characteristic (or attributive) statement about everything, it may help to consider everything as “undifferentiated” to counter previous misconceptions. For example, this might help to correct the points of view that everything is either a compound entity (one which is made up of parts), or a simple entity (which consists of only one part). However, knowing what it is to be undifferentiated requires knowing what it is to be differentiated; since “everything” is the first concept that we wish to introduce, there is no differentiated thing with which to contrast it. From a subjective point of view, everything exists prior to the properties used to categorize it: because these properties serve to discriminate one thing from another, it is impossible to define any properties on the basis of only one thing.

In both the case where everything is considered as a single entity and where it is considered to be multiple entities, the thing or things referred to are the same in that they have the same spatiotemporal extent. In this case, it is the decomposition (or composition) of everything that is different. From a nominalist point of view, it is the consideration of everything that makes it one thing or another: everything remains undifferentiated, independent of our consideration of it. [5]

The fact that everything might be considered to be both one thing and many things highlights two different notions of identity: one which is evaluated spatially, and one which is evaluated in language (between references). Things which occupy the same space (and the same time) are identical, so from one point of view, there is no difference between one thing and many things (as long as they occupy the same space). From another point of view, two things are not the same as one thing in that the symbols which reference those things are not identical. For example, “an apple” is materially (spatially) equivalent to its “seeds, skin, stem and fruit”, although these things have different criteria for identity on a conceptual (descriptive) level. The “everything” being described here is that which is materially equivalent to all of its parts (no matter how, or even if, it is decomposed).
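The two notions of identity can be illustrated with a small sketch. It rests on assumptions that are not part of the text: a thing is modeled as a label together with the set of spatial cells it occupies, and the names `Thing`, `spatially_identical`, and `referentially_identical`, as well as the cell numbers, are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thing:
    """Hypothetical model: a label plus the cells of space it occupies."""
    label: str
    extent: frozenset


def covered(things):
    """Union of the cells occupied by a collection of things."""
    return frozenset().union(*(t.extent for t in things))


def spatially_identical(a, b):
    """Material identity: both descriptions occupy exactly the same cells."""
    return covered(a) == covered(b)


def referentially_identical(a, b):
    """Identity between references: both descriptions use the same labels."""
    return {t.label for t in a} == {t.label for t in b}


# Invented cell numbers; only the fact that the parts tile the whole matters.
seeds = Thing("seeds", frozenset({0}))
skin = Thing("skin", frozenset({1, 2}))
stem = Thing("stem", frozenset({3}))
fruit = Thing("fruit", frozenset({4, 5, 6}))
apple = Thing("an apple", frozenset(range(7)))

as_whole = [apple]
as_parts = [seeds, skin, stem, fruit]

print(spatially_identical(as_whole, as_parts))      # True
print(referentially_identical(as_whole, as_parts))  # False
```

Under this toy model the apple and its four parts are the same thing when compared by extent, and different things when compared by the references used to describe them.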

Everything is ineffable. Even the morphological parts of the term “everything” indicate that to describe everything, it is broken into parts (“things”) and then collected together again (“every”). Spatial metaphors are used to describe it, although this may be overly restrictive, since most spatial metaphors are typically limited to three dimensions. A more nominalistic stance would hold that everything has as many dimensions as are necessary for a given description. More precisely, for every dimension which space possesses, everything occupies every part of that dimension. This description covers the rather interesting case in which the universe itself does not have a particular dimensionality in isolation from the language used to describe it.[6]

Everything neither has properties nor has no properties.

Everything cannot be called large, but neither can it be called small. Likewise, everything is neither singular nor plural. It is not the case that everything has properties.

However, neither is it the case that everything has no properties. The combination of these statements is somewhat of a puzzle, since most things either have a property or do not have that property. However, if the nature of words is relativistic, then there is no sense to be made of something which has no comparator; and that is exactly how everything is defined. Everything cannot be compared to something, since there is no something other than everything: to compare something to itself is tautologous. Conceptually, since no relative judgment is possible, no judgment whatsoever is possible: conceptual judgment is inherently relative.

Perhaps calling a thing both good and bad is an attempt to make the ineffable, effable; perhaps the best description of the ineffable characterizes it with every possible term, as well as the opposite of that term (e.g. everything is both good and bad). A complementary attempt to describe everything utilizes the negation of both terms (e.g. everything is neither good nor bad). While it is incorrect to say anything about everything because of the potential for misunderstanding, this book follows the latter convention: with respect to a property Px, “everything is not Px” and “everything is not not-Px” (this implies that “not Px” is what is called a non-affirming negative).

It is important to note that in saying that there is no absolute conceptual goodness, we are not advocating any sort of moral relativism. There is certainly such a thing as doing good, but it entails a particular perspective: for example, doing good often implies doing good for other people. One might hold certain things to be good in themselves, but there must be bad things to which they are compared. Further, those good things are only good in a particular context. For example, although there may be a sense in which everything is good at a particular time, that is probably relative to a previous time when things were not so good.

Some people might disagree that definitions are inherently relative; they may hold that “good” is an objective characteristic. In other words, an object's goodness does not require comparison with some other object, so this goodness is not relative to something else. Non-relative (objective) goodness, however, is full of contradiction: it is impossible to know what good means anymore if there is no longer any bad. Further, if the concept of “good” is not relative to “bad” (i.e. if the two do not lie on opposite ends of a single continuum), then a single thing could be both good and bad.[7]

Applying a relative term to a wholeness, or something which is not itself a part of something else, poses a paradox. For example, in the introduction to the radio show A Prairie Home Companion, it is said of a town called Lake Wobegon that “... all the children are above average.” Although this is a pleasing image, it is not logically possible. Being above average is clearly relativistic, so not everybody can be above average; in order for someone to be above average, someone else must be below average. Finding a comparator in this case is not a problem: the people of Lake Wobegon are smarter than the people of Shelbyville. For everyone to be above average, however, there is a problem: if the population referred to by “all the children” is the same population from which the average is calculated, somebody must have a below-average child (apologies to the relevant mommies and daddies).
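The arithmetic behind the “all the children are above average” claim can be checked with a short sketch. The scores and the outside average below are invented for illustration, and `all_above_average` is a hypothetical helper name.

```python
from statistics import mean


def all_above_average(scores):
    """True only if every score exceeds the mean of those same scores."""
    avg = mean(scores)
    return all(score > avg for score in scores)


# Invented scores for the town's children.
children = [112, 118, 125, 131]

# Measured against their own average, someone must fall below it.
print(all_above_average(children))  # False

# Measured against a different population (an assumed outside average),
# every child can be above average without contradiction.
outside_average = 100
print(all(score > outside_average for score in children))  # True
```

The first check fails for any non-empty list of scores, because the mean can never be smaller than the lowest score; the second succeeds only because the comparator lies outside the population being described.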

There is a mismatch between the thing everything and the terms used to describe it: everything is absolute, but terminology is relativistic. Conventionally, one might say that everything has this or that property, but this entails comparing one concept of the world with another, and this latter comparison says more about concepts than it does about the world.

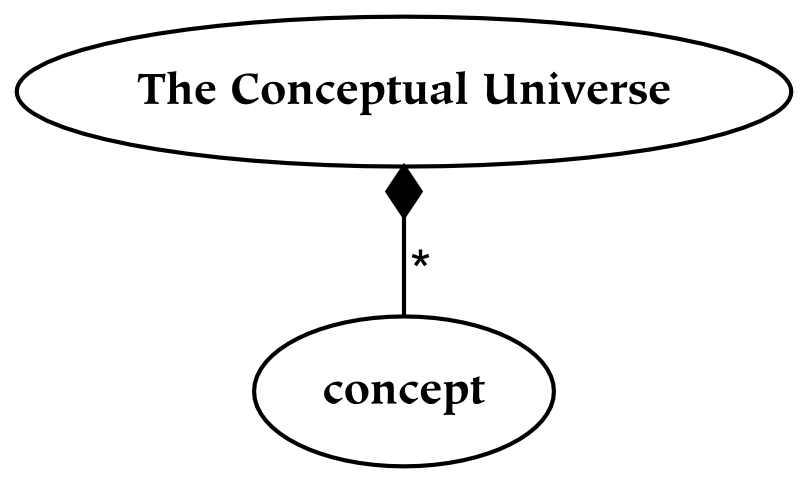

Universes are everything from a particular point of view.

This book describes several universes. Since the term universe is generally construed to be all-inclusive, it may seem counterintuitive to have more than one of them. On the one hand, the physical universe contains all of the other universes as parts. When referring to this all-inclusive universe, it is known as the universe. However, there are other entities which are in some sense unbounded, in light of which they will also be called universes. It is of course odd to have multiple unbounded entities, especially if some are parts of another, so the existence of multiple unbounded entities must be further elaborated.

To reiterate, the universe is that which contains absolutely everything: in this absolute sense, there can clearly be only one universe (which is why the definite article is emphasized in this context). However, the universe as seen from a particular reference point is also a universe: it is a universe from the subjective point of view (or point of reference). These subjective universes, based on particular points of view, are composed of references to the containing universe.

Subjective universes exist within the universe, as well as being universes in their own right. Just as a reference is itself a thing and a reference to a thing, so a universe which consists of references is both a referential universe and contained in the universe (to which its references refer). To use a more concrete example: spoken words may stand for something else, but they are also themselves sounds. So the universe of spoken words is both contained in the universe of sounds, and it is a universe of its own (when the words are understood as references). When a given referential universe is viewed in relationship to the universe, it is seen to be a part of it: in that larger context (or from that larger perspective), these referential universes are merely parts. On the other hand, when they are viewed from their own perspective, they operate as the entire universe, in the sense that nothing exists outside of them from that perspective.

To borrow an example from a later section of this book, the subjective universe is everything that an individual can perceive. Everything, from a subjective point of view, is the entire field of perception. Although the subjective universe can be restricted by attention, which limits what is perceived (or conceived), a given perceiver will never perceive outside of their perceptual universe. In this sense, it is complete: it is a whole, or a totality. From the subjective perspective, references to physical things are everything that exists: therefore, subjective experience forms a universe. Similarly, although concepts may be restricted to some domain of discourse, concepts also form a universe. The conceptual mind lives in a universe of concepts, in that nothing can be conceived which is not a concept.

To use a more concrete example, our house may be a part of the world which we enter and leave, but if we never leave it, it is our universe. People may come and visit, and tell us of the world outside, and we may form an idea of the world outside, but we still form an idea of the world outside from within our house. There is nothing inside of our house which is outside of our house. Universes are like this; it is possible to have references to things outside of a universe from inside of a universe, and many things can be accommodated (referentially) within that universe. From the referential perspective, the set of references is complete, unbounded, and whole. However, from the perspective of the larger container, references are categorically different from the things they point to, and these universes are incomplete, bounded, and merely parts.

To return to the subject of the (physical) universe, it is defined to contain all of the other universes. This containment relationship between the physical and other universes is often taken for granted, but it is not the only possibility. For example, we might believe that the subjective universe contains the physical universe. In other words, our only knowledge of the external world comes through experience with the subjective world, so it is not possible to confirm that there is an objective world independent of the subjective world.[8]

Wholes, as opposed to collections of parts, are united.

What is it to be a whole? For a thing to be a whole means that it is united: a single thing. Although it may be composed of other things, and it may in turn compose other things, there is some integral quality to it.

For a thing to be an integrated whole also seems to imply that there is something else from which it can be differentiated. Wholeness is the result of a boundary: it makes everything inside the boundary the same thing, and everything outside a different thing. Everything is not like this, in that it does not have an inside and an outside (these are qualities only of something).

Wholes do not generally overlap one another, although things can be at least partially coextensive with other things (i.e. they can occupy the same space). For example, this coextensiveness is allowed when the material is the same: the top 2/3 of my body is partially coextensive with the bottom 2/3 of my body. In this case, it is not the things that overlap, but the references to them. Overlap is generally not allowed when the material constituting the things is not the same. In fact, it is not even clear what it would mean for different material to occupy the same space, unless one of the things is tangible and the other is some sort of an ethereal, ghost-like thing.[9]

Wholes are said to be greater than the sum of their parts, which is a bit of a puzzling notion. There is at least one way in which it is true, and one in which it is false. On one hand, a whole is not greater than the sum of its parts if we understand identity materially: the material that composes a whole is exactly the material that composes its parts. For example, the material that constitutes the wheels, body, and the rest of a car is equivalent to the material that constitutes the entire car. On the other hand, a whole is greater than the sum of its parts if we consider properties such as the relations between parts to be properties of the whole, and not properties of the parts themselves (e.g. the spatial arrangement of parts). For example, the wheels, body, and the rest of a car are not sufficient to carry you about if they are lying in a heap.

A further claim about what makes wholes more than just the sum of their parts has to do with emergent properties. Emergent properties are said to emerge only when considering the whole, and are not properties of the parts of that whole. An example of this claim is that simple neuronal elements connected in varying ways lead to a brain: a whole which has properties that are not properties of the individual elements. Whether or not these properties could have been predicted based on knowledge of the smaller parts is the subject of some debate. In either case, the behavior of the whole can certainly be quite difficult to predict based on knowledge of the individual elements.[10]

In summary, there is something cohesive about things. Physical things tend to be cohesive in that three-dimensional objects often maintain their (approximate) shape. By contrast, the labels which we apply to these things are even more cohesive: objects are changing all the time, but their names are not.[11] Although using the same word for slightly different objects leads to an economy of expression, it is prudent to ensure that this categorical understanding does not reduce our relationship with reality to one which is exclusively categorical.

[4] Note that the use of the word spatial in this context denotes the three-dimensional (classical) notion of space. More often, the use of the term space in this book should be understood in a mathematical sense, where it may have an arbitrary number of dimensions. In this latter sense, it is often called N-space, where N is the number of dimensions.

[5] The fact that everything is undifferentiated, but our conception of it is differentiated, is somewhat odd since all things (conception included) constitute parts of this everything.

[6] This description is nominalistic in the sense that it is not as much of a characteristic statement about everything as it is a conditional statement: there is no commitment to the fact that everything must have (a particular) dimensionality.

[7] It is conceptually meaningless for a thing to be both good and bad at the same time, from the same perspective, using the same criteria for assigning the terms.

[8] The discussion of the way that different universes relate to one another is analogous to the philosophical debate about monism and dualism. This discussion focuses on the relationship between the world of ideas (or perhaps the world of the spirit) and the world of matter. Roughly, dualists believe that both matter and spirit exist, and that they are different; monists believe in the ultimate existence of either only ideas or only matter (these two subgroups are called idealists and materialists, respectively).

[9] We assume that if things do not occupy the same space, then they do not occupy the same space at a small physical scale, either (which rules out mixtures of things).

[10] For a popular example, visit the boids link at http://www.cognitivesettheory.com/links

[11] Thank goodness. I have a problem even with names that are not changing all of the time.


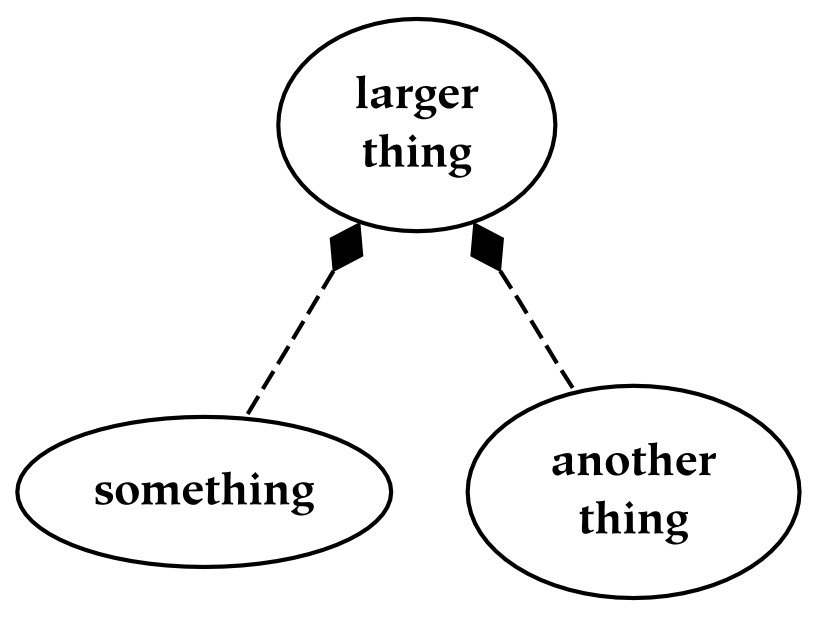

Something is the result of partitioning a larger thing.

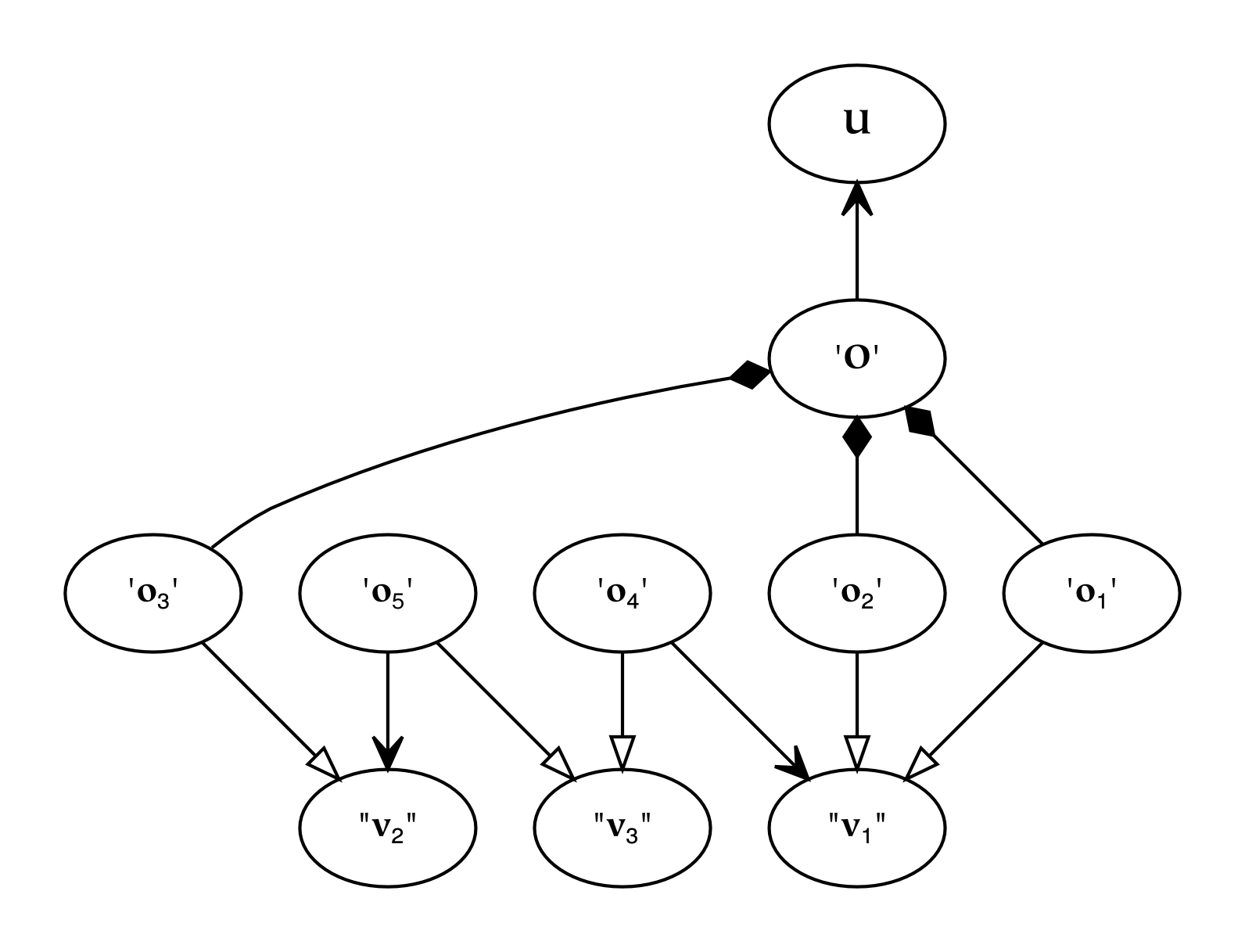

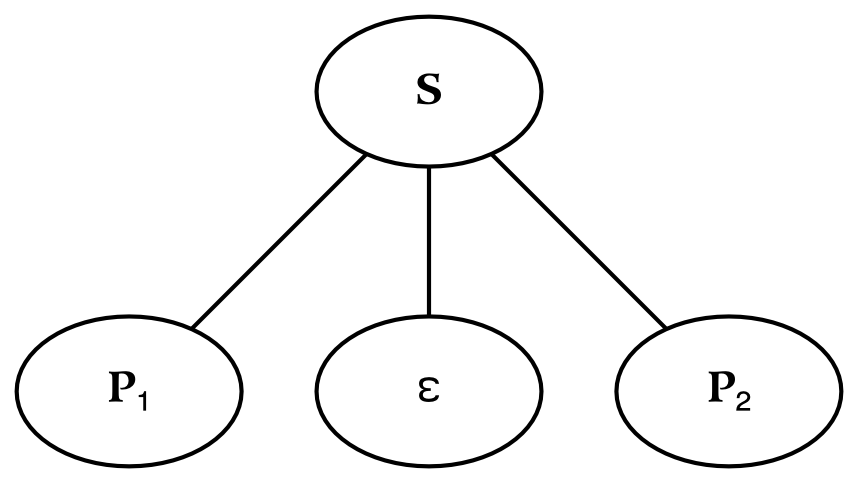

This picture depicts something. The attached diamond-headed arrow indicates parthood: something, unless it is everything, is always a part of something else (in this drawing, that larger thing has been omitted).

The partition of a thing and the parts of that thing entail one another.

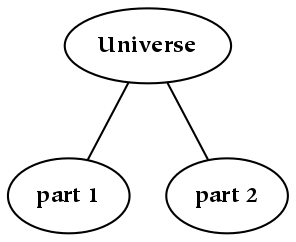

This book begins with everything, and then introduces a partition (or division). This partition entails the creation of at least two other things, each of which is a part. Parts constitute everything, are potentially collections of smaller things, and are of course things in and of themselves. The part structure of things can be represented as an upside-down tree: a single trunk at the top represents everything, or at least everything in the domain of discourse. This tree can be described using the terminology of a family tree: the parent thing gives rise to (and is depicted above) the child things, which are siblings of one another.[12]
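The upside-down tree and its family vocabulary can be sketched as a small data structure. This is only an illustration under stated assumptions: the class name `Part`, its methods, and the particular things in the example are hypothetical and do not come from the book.

```python
class Part:
    """Hypothetical node in a part hierarchy (a meronomy)."""

    def __init__(self, name, parent=None):
        self.name = name
        self.parent = parent      # the larger thing this is a part of
        self.children = []        # the parts created by dividing this thing
        if parent is not None:
            parent.children.append(self)

    def siblings(self):
        """Other parts created by the same division of the parent."""
        if self.parent is None:
            return []             # the root (everything) has no siblings
        return [c for c in self.parent.children if c is not self]

    def show(self, depth=0):
        """Return the tree as indented lines, with the root at the top."""
        lines = ["  " * depth + self.name]
        for child in self.children:
            lines.extend(child.show(depth + 1))
        return lines


# Invented example: everything is divided, and one part is divided again.
everything = Part("everything")
apple = Part("apple", everything)
rest = Part("everything else", everything)
stem = Part("stem", apple)
fruit = Part("fruit", apple)

print("\n".join(everything.show()))
print([s.name for s in stem.siblings()])  # ['fruit']
```

Each node records its parent, so the sketch reads the hierarchy from the whole downward: a part is introduced by naming the thing it divides, not by collecting smaller constituents.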

Often, something is described in terms of its constituents, the somethings of which it is composed. For example, sets are often defined as collections of elements; cars are often described in terms of their engines, wheels, and so forth. In this book, this bias is countered by emphasizing that things are parts of a larger whole, thereby emphasizing the relationship of a part and its complement. These two descriptions are not incompatible, but they are certainly different; they emphasize different points of view. How a thing is initially defined often emphasizes what is most important about that thing, or at least what about it is most salient. It is also indicative of which concepts arose first in the conceptual universe. The first concepts are often used as the edifice of subsequent concepts, and are essential to the archeology of our conceptual landscape.

The holistic tendency to explain things in terms of their relation to everything contrasts with the reductionistic tendency to explain things in terms of their relation to their smaller constituents. The holistic point of view emphasizes that something is always a part of something larger, with only one exception: everything, which is the singular starting point for all part hierarchies. This everything cannot be explained by holistic theories, just as atoms cannot be explained by reductionistic theories. Despite this holistic emphasis adopted here, it is probably not possible to divide an undifferentiated whole into two parts if there is not some difference within its constitutive “stuff”. Therefore, the creation of something is collectivizing as well as dichotomizing.

As the epigraph of this section states, “The partition of a thing and the parts of that thing entail one another”. This implies both that a partition implies parts and that a part implies a partition. The latter fact tends to be overlooked by a reductionistic description of the part: for example, we may describe some part of a thing, but neglect the effect of that description on the counterpart of the described thing. In other words, the fact that two things are created by dividing the larger whole sometimes goes unnoticed, despite the fact that it has a number of logical consequences. One way to ameliorate this issue might be to ask “which boundaries really exist?” instead of “which things really exist?”. Although it is a bit of a chicken-and-egg situation, perhaps it is useful to conceive of the division between parts coming before the identification of the parts themselves: the boundary between objects creates the objects.
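In set-theoretic terms, the observation that describing one part also fixes its counterpart resembles taking a complement within a larger whole. The following is a minimal sketch under that assumption; the function name `counterpart` and the numbered cells are invented for illustration.

```python
def counterpart(part, whole):
    """Return what remains of the whole once the part has been picked out."""
    if not part <= whole:
        raise ValueError("a part must lie entirely within its whole")
    return whole - part


# Invented example: the larger whole has ten cells; the part names three of them.
whole = frozenset(range(10))
something = frozenset({2, 3, 4})

other = counterpart(something, whole)
print(sorted(other))  # [0, 1, 5, 6, 7, 8, 9]

# The two parts exhaust the whole and do not overlap: together they are a partition.
print(something | other == whole)   # True
print(something & other == frozenset())  # True
```

Nothing in the call mentions the second part explicitly; it is implied as soon as the first part and the whole are given, which is the sense in which a part entails a partition.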

The following picture illustrates, by means of a dotted line, the things which are implied whenever we talk about “something” (i.e. that something is almost always a part of something larger). This larger thing serves as a context in which something should be understood: the role played by this larger whole is analogous to the domain of discourse [Boole]. As it is larger than the thing under consideration, another thing (the copart of the original part) is also implied.

The creation of a partition also implies the creation of a dimension, which is simply an axis along which divisions are possible. In the simple case of a dichotomy, the dimension allows the parent thing to be divided into two children. For example, if an apple can be divided into a stem and a fruit, then this dichotomy implies a dimension along which these parts are divided (although in this case, the dimension is neither linear, nor associated with a well-known name).[13]

Clearly, some divisions have a greater pragmatic value than others; if we are hungry, it makes more sense to identify apples as opposed to red things. However, apart from this pragmatic valuation, are there any qualities of the objects to which we refer that make them more highly qualified as objects, as opposed to other possible objects? In other words, is there any reason to (necessarily) decompose the universe in one way as opposed to another? Although the answer to this question necessarily remains speculative, it seems that everything has the capacity to be divided in numerous different ways (at least conceptually). The basis of this partitioning is a central topic of this book.

To summarize, here is a brief list of the characteristics of somethings (parts) that will be investigated in further detail:

- Parts are created by a division of everything. The divisions are merely decision boundaries, and not necessarily physical boundaries (e.g. a perfectly smooth marble may be conceptually divided into a left and a right half). A collection of one or more boundaries defines a dimension.

- The creation of a part is also the creation of a partition; one of the things created by the partition is the part in question, which is often associated with a label.[14]

- Necessarily, dimensionless entities cannot have parts. Similarly, entities with an associated proper (or nontrivial) dimension necessarily have parts.

- Partitions can be repeatedly applied to parts, so that hierarchies of parts are formed (under the assumption of continuity).

- There are many ways to partition something. Hence, a given something may be identified as a part within a larger context, or it may be identified in virtue of the parts that it contains.

- If everything can be divided in arbitrary ways (using various non-unique partitions), it merits investigation why we divide it exactly as we do.

- In addition to being split, parts can also be combined to form new entities. Hence, although parts are the result of partitions, their subsequent recombination allows for the creation of discontiguous entities.[15]

- The partition occurs before the part. As a result, parts and their counterparts have an equal (ontological) footing (even if only one of them is named).

The smallest thing has no parts.

The word “atom”, as defined by the early Greek and Indian philosophers who invented the term, means literally uncuttable or indivisible. The atom is therefore the smallest thing, since it has no parts. For a universe to be atomic (i.e. to have parts which are atomic) means that the process of creating parts cannot occur indefinitely: there are small things which cannot be subdivided.

In the physical universe, the name “atom” now represents a particular kind of particle. Unfortunately, that particle was subsequently found to contain parts (atoms have electrons, neutrons, and protons as parts). Oddly enough, the name stuck to the particle, despite the fact that the particle ceased to earn its name. The quest to find increasingly small particles has continued, and today it remains an open question whether the physical universe is atomic or not.

In addition to physical atoms, we may wish to consider perceptual atoms. In the perceptual universe, the smallest difference in perception is referred to in psychology as the “just noticeable difference”. From a physical point of view, the just noticeable difference may be bounded below by the firing of a single neuron (although there are also numerous chemical changes that are associated with this event). In other words, if we assume that our perception is mediated by the electrical interaction of the neurons in our brains, then the smallest unit of information which we are able to perceive is the firing of a neuron. From a perceptual point of view, however, this neuronal firing is the smallest observable change, so it might be a candidate for the title of “perceptual atom”. However, this perceptual atom is so small relative to perception as a whole that perception, even if it is not continuous, is a discrete approximation of continuity.

The determination of whether or not concepts are atomic is complicated because the scientific study of concepts and the conceptual universe is contentious: verbal report of mental states is notoriously unreliable. Hence, in order to measure concepts, we will measure their near analogues, symbols (not the perception of them, but the conception of them). The smallest units of symbolic meaning are morphemes, which in many cases correspond to words (or more technically, lexemes). Morphemes are atomic in that they are not composed of smaller meaningful parts, as with other parts of speech. Although the representation of a concept is not atomic (it consists of letters or sounds and has a distributed representation in the brain), and the object referenced by a concept may or may not be atomic, it is a central thesis of this book that concepts are atomic.

Something cannot have a dimensionality less than its parent thing; it occupies a nonzero interval on every dimension which the parent occupies.

Can something have a dimensionality less than the whole of which it is a part? Although one could imagine something that occupied an arbitrarily small extent along one of the dimensions of the parent thing, to posit that something has no extent along one of its dimensions leads to a large number of Zeno-like paradoxes.

One of the older of a number of conundrums related to this topic asks how many points exist on a line (where points are assumed to be zero-dimensional things and lines are assumed to be one-dimensional things). Although one branch of modern mathematics provides a ready answer, it is debatable whether this answer is truly substantive. In particular, although we have named the answer, it may not be that we have actually defined the answer in meaningful terms. The name given to the answer, an “uncountable infinity of points”, is not a number like other numbers. For example, it does not grow when other numbers are added to it. In some sense, then, it is not a number at all, at least in the sense of the original question.

A similar problem is posed by understanding space as composed of zero-dimensional points. Maintaining that volumes are composed of points corresponds to the mathematical notion of point sets. Point sets assume that things are composed of points, or atoms which are of a lower dimensionality than the larger whole which they occupy. Unfortunately, this understanding forces points that lie on the boundary between one object and another to be associated with either one object or the other. This poses problems because the boundaries of objects (and hence the objects themselves) become characteristically different: the object possessing this boundary is said to be “closed”, and the object lacking this boundary is said to be “open”. One of the many problems which result from this view is that two closed objects cannot touch each other, since between any two points there are an infinite number of other points.[16]

This does not mean that all talk of points, lines, and other such objects is discounted, but it does mean that none of their dimensions will be allowed to have an extent of zero. In other words, points are taken to correspond to atoms whose extent is (only) arbitrarily small: perhaps infinitesimal, but still nonzero. Boundaries, on the other hand, are free to have a lower dimensionality, since they do not exist as parts in the space that they divide.

The properties of something may be extrinsic or intrinsic. All objects have extrinsic properties except everything, and all objects have intrinsic properties except atoms.

There are several different ways to say what an apple is, or to give a description of it. One alternative is to define it functionally: it is something to eat, or something that grows on trees. These are extrinsic properties of an apple, because they depend on the apple's relationship with other things. The apple can also be defined intrinsically, by defining some characteristic property of apple-matter. That property is attributed to the apple itself; it is relatively independent of the apple's relationship with other things.

The distinction between intrinsic and extrinsic properties is important to keep in mind when describing the conditions for identity between two objects. Twins, for example, may be intrinsically identical if they have the same physical appearance and parts (under the assumption that different material of the same type is identical). They are not extrinsically identical, however: at the very least, their spatial positions are distinguishable.

A close approximation of this distinction between intrinsic/extrinsic properties is the distinction between interior/exterior properties.[17] For example, a property of apples such as “being eaten by people” is an extrinsic property. Properties such as “being eaten by worms” are also probably extrinsic properties, even if the worm is inside of the apple (which makes it clear that the matter is not always clear-cut). One might argue that the boundaries of the apple do not include the worm; in any case, the essential piece of information that makes a property extrinsic is its dependence on some other thing.

Given the relatively holistic presentation in this book, extrinsic properties are emphasized. A thing has extrinsic properties in virtue of its relation to other things. The intrinsic properties of a thing, therefore, can be defined as the extrinsic properties of that thing's parts. Accordingly, it is clear that a thing which is not a part of something else does not have extrinsic properties, and a thing with no parts does not have intrinsic properties. The two things satisfying these criteria are known as the universe and the atom, respectively. In terms of location (instead of properties), since there are no objects outside of the universe, there are no objects in terms of which to define it: hence, it cannot have an extrinsic definition. At the other end of the continuum of size is the atom: a thing with no parts has no parts between which extrinsic relations could hold; therefore, it does not have an intrinsic definition.[18]

Intrinsic properties characterize the parts of a thing.

Intrinsic properties describe or define an object in terms of its parts. This method of definition is exploited by reductionism. For example, understanding the behavior of an individual reduces to the sciences of physiology and psychology (which describe the activities of the brain). Psychological understanding reduces to the science of biology, which studies the activities of the neurons that constitute the brain. Biology, in turn, reduces to chemistry or physics, which studies the molecules that make up the neurons.

Ultimately, this reduction results in a very detailed explanation, but not necessarily an increase of explanatory power. The big is not caused by the small, just as the parts are not caused by the whole. They are simply different levels of description, both of which are a valid description of reality (albeit descriptions of reality that deal with differently sized or shaped parts). While a description that uses small parts may be more detailed, it is also more complicated. So, while it is possible to describe a person by using a physical description that corresponds to the movement of their molecular parts, it is not necessarily of great benefit. In fact, the description of an individual in terms of various neurotransmitters might be substantially less useful than a physiological description, since we know how to effect physiological change more easily (which of course has an effect on chemicals in the brain).

Extrinsic properties characterize the whole of which a thing is a part.

Extrinsic properties can be investigated in the linguistic domain by examining the meanings of words (or more specifically, morphemes, lexemes, and phrases). The symbolic equivalent of the extrinsic definition of an object is the definition of one symbol (or phrase) using other symbols. The intrinsic description of the corresponding concept would be an analysis of its constituent words or morphemes. Both of these definitions occur in most dictionaries, which provide both the etymology of a word and the definition of that word using other words.

An interesting test for the extrinsic identity of symbols is known as linguistic substitutability. If two different words or phrases are able to be used in the same context (i.e. the same position in a given sentence), then they are linguistically substitutable, which most often implies that they are of the same type, or that they can play the same role. For example, ball and boy are substitutable in the following sentences (in that they do not change the meaning of the larger context), but bucket is not:

- Kick the ball.

- Kick the boy.

- Kick the bucket. (understood as a synonym for "to die")

The last example is interesting because it demonstrates that “bucket” in this context is not a semantically complete thing. The idiomatic expression “kick the bucket” means to die, which is not a compound that involves the meaning of the word “bucket”. The semantics of “bucket” is irrelevant in this context: the word “bucket” acts like a phoneme instead of a morpheme.

The context of a word can determine one of several definitions of that word. More precisely, the single word is called a homonym, and the multiple words (i.e. those with different meanings) are called lexemes. To illustrate this, the preceding examples can be altered as follows:

- We had a ball.

- We had a boy.

- We had a bucket.

“Having a ball” might connote either having a fun time or having a round toy in this context, which illustrates that the homonym “ball” contains at least two lexemes. In the second phrase, having a boy probably connotes that we have given birth to a child, which illustrates that the verb “to have” is a homonym. Finally, the word “bucket” in this context, as opposed to its context in the previous example, is once again a complete noun, meaningful on its own (or at least meaningful to a greater degree).

There are at least two different ways to understand homonyms. Under one understanding, a single word may contain multiple definitions, each of which is complete. Under another, the single word contains an incomplete definition, which can only be completed in context. And just as the definition of a word may be intrinsic or extrinsic, there are numerous things which are similarly incomplete or ambiguous without a larger (clarifying) context.

Properties characterize the relations of a thing.

The creation of parts is a process which is necessarily relativistic: a part depends on its counterpart, or the complement of that part, for its definition (in particular, its extrinsic definition). To state the matter slightly differently, when characterizing a thing with properties or attributes, the complement of that thing is also characterized. When everything is divided into something and not-something, something has a certain characteristic property in light of which the division is possible in the first place. The not-something, on the other hand, does not have that property: further, the not-something has the not-property.

For example, consider a table. Now, imagine a part of that table: a table-top thing, which is composed primarily of the surface of the table. In virtue of (conceptually) creating this part, a complementary thing has been created: the legs of the table (i.e. the remainder after the partition). The fact that it is possible to distinguish the table top from the rest of the table implies that it has some property that the rest of the table does not: let us say that it has the property that we can put drinks on it. Because the object has this characteristic property, the complementary object has a complementary property, i.e. the legs of the table have the property that drinks cannot be placed on them. If they did not have this complementary property, then the basis for creating the dichotomy in the first place would disappear (assuming that the table or legs do not have other characteristic properties, which in reality they certainly do).

Under this analysis, the creation of an object is analogous to naming one part of a divided thing: creation is a division in addition to a collection. Every time we create something, we implicitly create at least two somethings. Neither thing is ontologically prior to the other, although we often name only the object on one side of this boundary (which side to name is most often a pragmatic decision). For example, within the context (or superset) of fruits, some subset may be designated as “apple”: there is no (simple) designation for the object which is materially constituted by “all fruits that are not apples”. So the latter object must be referred to by a complex expression, by negating that which has been named: “non-apple”. Note that this negation (or complement-formation) requires that we know the whole from which the part was created: a non-apple in the context of “all food” means something other than a non-apple in the context of “all fruits”.
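The dependence of a complement on its context can be sketched in a few lines of Python. This is only an illustration: the item names are hypothetical, and wholes are modelled here as finite sets purely for convenience, which is a simplifying assumption rather than a claim about the nature of wholes.

```python
# Hypothetical contexts; the item names are illustrative only.
fruits = frozenset({"apple", "banana", "pear"})
food = fruits | frozenset({"bread", "cheese"})
apple = frozenset({"apple"})


def complement(part, whole):
    """Return the counterpart of `part` within the given `whole`."""
    if not part <= whole:
        raise ValueError("the part must lie within the whole")
    return whole - part


# The same named part yields a different "non-apple" in each context.
non_apple_in_fruits = complement(apple, fruits)
non_apple_in_food = complement(apple, food)
```

Here `non_apple_in_fruits` contains only the banana and the pear, while `non_apple_in_food` also contains the bread and the cheese. Taking the complement of the complement within the same whole returns the original part, which reflects the equal footing of a part and its counterpart.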

It is a mistake to see non-apple things as only lacking in something: possessing the property of being “not-apple” is every bit as characteristic as the concept of apple. Of course, “being a not-apple” may be a less useful piece of information compared to “being an apple”, because it is a characteristic of a comparatively large number of things. Still, having a property has no more reality than having the opposite of that property, just as the thing apple has no more reality than the thing not-apple (note that we are not talking about the symbols “apple” and “not-apple”, where one is a compound word and the other is not). The creation of a decision boundary results in two things, each of which has a characteristic property. Again, which object is named or labeled, as opposed to which object is referred to through negation, is a pragmatic concern. Similarly, the difference in formulation between having a given property and having the negation of that property is a feature of references, not of the things to which those references refer.

Additionally, whether an object possesses a property or not depends on the counterpart of that object. As a concrete example of this conceptual relativism, consider whether “strong people require weak people”. In particular, imagine a woman who is strong (e.g. someone in a gym who is lifting a heavy weight). Suppose that her ability remained roughly constant, while everybody else on the planet started weight training, and became capable of lifting weights that she could not lift. If we still called her strong, there would be nobody to call weak anymore. Perhaps we would call everyone else “super-strong”, but it seems more likely that we would not call her strong; we would call her weak (even though her ability did not change).

If we do not change the label which we assign to her, the semantics of that label have to be greatly altered. Although we may continue to call her strong, she was relatively strong, and she is now relatively weak. In either case, she is not in control of being weak or strong. Calling her strong depends on other people; it is a relative judgment that depends on the whole of which she is a part. Superman is not super compared to his friends from planet Krypton; he's Regularman.
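The thought experiment can be made concrete with a small sketch. The numbers are invented, and the use of the population median as the dividing line between “strong” and “weak” is an assumption made only for illustration; any other comparator would make the same point.

```python
from statistics import median


def label(lift_kg, population_kg):
    """Label a lifter relative to the population of which she is a part."""
    # Assumed rule: "strong" means lifting more than the population median.
    return "strong" if lift_kg > median(population_kg) else "weak"


her_lift = 80                      # her ability never changes
before = [30, 40, 50, 60, 80]      # hypothetical population, herself included
after = [100, 110, 120, 130, 80]   # everyone else has started weight training

label_before = label(her_lift, before)
label_after = label(her_lift, after)
```

With these figures `label_before` is `"strong"` and `label_after` is `"weak"`, although `her_lift` is the same in both calls: the label is a function of the whole, not of the part alone.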

Some people might maintain that certain attributes of a thing are not relativistic in this sense, or that some attributes have semantics which do not depend on that thing's complement. One example that the scientifically-minded might raise is the mass of an object: the mass of an apple does not depend on the mass of a banana, does it? The banana is not directly used to compute the mass of the apple, but the measure of a thing is always taken with respect to something else. For example, suppose that the mass of the apple is expressed in kilograms. A kilogram was originally defined as the mass of a certain volume of water (one litre, at a fixed temperature).[19] It is by definition relative; the primary difference between this and the previous example about a given person's strength is that in this case the comparator is a single object (a certain volume of water), whereas in the last example the comparator is a number of objects (other people). Although some choices of measurement may allow consistent application to a wider range of phenomena, there is no a priori reason to use one comparator as opposed to another.

Some people may object that the strength of a person may change, but the mass of a specific volume of water does not. To know that the mass of an amount of water does not change, however, we have to weigh it (let us suppose that it weighs one kilogram). In other words, it weighs as much as some other object that weighs one kilogram. If the mass were to change, all we know is that the mass of other objects must have changed at the same time. So we cannot conclude that the mass does not change in an absolute sense, but only that it does not change with respect to something else (unless this is how we define absolute change in the first place).

This relativistic viewpoint is closely related to a conundrum proposed by Henri Poincaré: if the size of the world doubled overnight, would you notice it? If you assume that all other masses and laws of physics were adjusted as necessary, it is not possible to tell the difference (whether such an undetectable difference is in fact a difference at all is left as an exercise for the reader).

Dichotomy both collectivizes and dichotomizes, without being intrusive on the dichotomized domain.

Although the universe may be divided into things, the dividing line itself does not have any concrete existence. Neither do any number of dividing lines: the dividing line itself does not occupy the same space that the objects occupy. However, this does not entail that the dividing line is insignificant: it is essential for the formation of sets. Conceptually, there is a difference between a set of apples and a set of pairs of apples, even if these sets ultimately refer to the same apple material (i.e. if they have the same spatial extent). In this section, we explore the nature of the boundary that is created by dichotomy.

Sets are discrete: they may be divided into their members in only one way. Wholes are continuous: they may be divided into further parts in arbitrary ways.

If something is created out of everything by a process of dichotomy, then it is a part of everything, but it is not a subset of everything. Hence, there is a very distinct difference between parthood and the relations of set theory. With respect to parts, if my hand is a part of my arm, and my arm is a part of my body, then my hand is a part of my body. With respect to sets, however, the analogous chain fails for membership: if my hand is a member of my arm, and my arm is a member of my body, then it does not follow that my hand is a member of my body: my hand is a member of a member of my body. Expressed mathematically, the transitive property does not hold for set membership (although it does hold for the subset relation proper), but it does hold for parthood among (spatiotemporal) things.
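The contrast can be sketched in Python, where nested `frozenset` values keep every level of curly braces. The body-part names are taken from the example above; the one-dimensional regions used to model parthood are hypothetical and chosen only so that containment is easy to check.

```python
# Sets: each level of curly braces is preserved.
hand = frozenset({"fingers", "palm"})
arm = frozenset({hand, "forearm"})
body = frozenset({arm, "torso"})

hand_in_arm = hand in arm     # the hand is a member of the arm
arm_in_body = arm in body     # the arm is a member of the body
hand_in_body = hand in body   # but the hand is only a member of a member


# Parts: model each thing as the (start, end) region it occupies.
def is_part(inner, outer):
    """True if the region `inner` lies within the region `outer`."""
    return outer[0] <= inner[0] and inner[1] <= outer[1]


hand_region, arm_region, body_region = (8, 10), (5, 10), (0, 10)
```

In this sketch `hand_in_arm` and `arm_in_body` are both true while `hand_in_body` is false, whereas `is_part` holds for all three pairings of the regions. The difference comes entirely from the braces: the regions have no wrapper that could block the chain.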

Table 2.1. Set Theory and Mereology Compared

| Set Theory | Mereology |
|---|---|
| set | whole |
| subset | part |
| union | fusion |
| intersection | dichotomy (partition) |

The table above compares some of the terminology typically associated with set theory and mereology. In general, both sets and wholes are things, and both subsets and parts are somethings. All of the differences between set theory and mereology are ultimately due to the fact that in set theory, the curly braces have (ontological) significance. In other words, the curly braces cannot be taken away without consequences; they establish a significant boundary, such that the set of a thing is not equivalent to that thing. The set, therefore, is more than the sum of its parts.

Despite the ontological significance of boundaries, however, they are not of the same nature as the things that they collect; they are not parts themselves. To reiterate, sets and subsets have boundaries that are in some sense real, while wholes and parts do not. In both cases, however, the boundaries are not intrusive on the things that they contain (or divide): boundaries, even when they have some reality, are not of the same nature as things. The difference between set boundaries and mereological boundaries is also apparent when we consider collections (either unions or fusions) of subsets and parts: set boundaries are preserved, but mereological boundaries collapse (the parts fuse together, which is why a mereological union is known as a fusion). Similarly, intersection (as defined for sets) is not a valid operation on a continuum: hence, a mereological division is referred to as a dichotomy or a partition.
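As a rough illustration of preserved versus collapsing boundaries, the sketch below collects the two halves of a marble in two ways. The halves are modelled as hypothetical `(start, end)` intervals, and `fuse` is an illustrative helper written for this example, not a standard operation.

```python
def fuse(intervals):
    """Merge touching or overlapping (start, end) intervals into wholes."""
    merged = []
    for start, end in sorted(intervals):
        if merged and start <= merged[-1][1]:
            # The shared boundary collapses: extend the previous whole.
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


left_half, right_half = (0, 5), (5, 10)

collection = {left_half, right_half}     # a set: both members stay distinct
fusion = fuse([left_half, right_half])   # a whole: the inner boundary is gone
```

The set `collection` still has two members, while `fusion` is the single interval `(0, 10)`. Fusing parts that do not touch, such as `(0, 1)` and `(3, 4)`, leaves them separate, which corresponds to a discontiguous entity.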

A universe has no boundaries

Universes do not have boundaries; they are by definition unbounded. If a universe did have a boundary, then there would be something beyond it which it did not contain (and therefore it would not be a universe). In this section, we briefly examine the subjective/objective boundary from the point of view of both the subjective universe and the objective universe.

From the inside of a subjective universe, there are no boundaries: you do not see what you do not see (we cannot experience the objective world in a manner other than that in which it comes to us through our subjective experience). Although you experience a limited subjective world, it is impossible to experience the edge of the subjective world. To be more precise, you can know that the subjective world has a boundary or edge, but you cannot perceive it: to perceive an edge as such entails perceiving both of its sides. For example, from the outside, you may view yourself as coextensive with your body. But from the inside, your senses extend right through this boundary; they sense as far as they can, and vision perceives a good deal further than the exterior of the body. So when viewed from the inside, there is no inherent boundary at the edge of your body: in fact, there is no boundary at all.[20]

Similarly, from the outside looking in, there are no boundaries. Psychology has been looking inward (into the brain) for a long time, expecting to find the seat of the soul, but it cannot find the boundary point at which we cross from the objective world into the subjective world.[21] This boundary seems to retreat endlessly, no matter how far into the neural pathways you look. If you look from the outside-in, it looks like sensation continues all the way through (and before you know it, you wind up in action). It may turn out to be somewhat of a doomed endeavor to try to localize a subjective experiencer in the first place, if the boundary between the experiencer and what is experienced does not exist in the way that we think it does.

To summarize a few oddities about this elusive subject: universes themselves have no boundaries, parts of universes are created by boundaries that are not really there, and sets of things are demarcated by boundaries that are there in some sense (although we have not been explicit about their nature).

True and false are the essence of categorization.

Many statements may be either true or false: these statements are traditionally called propositions. These statements may not be anything other than true or false: hence, if a statement is not true, then we may infer that the statement is false. If it is not false, then we may infer that the statement is true. In slightly more technical terms, these statements are propositional functions which yield either a true or false result. For example, either an object has the property Px, or it has the property not-Px (which we abbreviate by writing ¬Px). The fact that there is no third alternative is known in the field of logic as the Law of the Excluded Middle. This law adds power to our reasoning: it allows us to infer statements on the basis of other statements, which might not otherwise be possible. This law is central to everyday reasoning, and it is closely related to dichotomy.

The law of the excluded middle is not just about binary (true/false) logic. In the field of fuzzy logic, which is an extension of binary logic, predicates take on true and false values as well as values in between: statements may, for instance, be eighty percent true. For example, we may feel that an Asian pear is only somewhat of an apple. If we feel that it is seventy-five percent apple, then the equivalent of the law of the excluded middle in the fuzzy logic context allows us to infer that the Asian pear is twenty-five percent not-apple.[22]
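The arithmetic behind this inference is small enough to write out. The sketch assumes the commonly used complement rule for fuzzy membership, in which the degree of not-P is one minus the degree of P; other complement rules exist, and the function name is hypothetical.

```python
def fuzzy_not(degree):
    """Return the degree of not-P, assuming the complement rule 1 - degree."""
    if not 0.0 <= degree <= 1.0:
        raise ValueError("a degree of truth must lie between 0 and 1")
    return 1.0 - degree


apple_degree = 0.75                          # the Asian pear as an apple
not_apple_degree = fuzzy_not(apple_degree)   # the Asian pear as a not-apple
```

Under this rule the two degrees always sum to one, so the binary case is recovered when the degree is exactly 0 or 1.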

Despite the power of the Law of the Excluded Middle, it is not always applicable. In particular, predicates have a range of valid application, or a set of things to which they can be applied. It is only in the case that a predicate can be validly applied that it divides a set of objects into those objects which have the property and those objects which do not. For example, assume that the predicate “green” can operate effectively only on things which are capable of having a color (i.e. things that emit or reflect radiation within the visible spectrum). Particles which are invisible in this sense may not be any color, so it would be meaningless to apply the distinction “green/not-green” to such particles. If we are determined to apply this predicate, then we are forced to say that:

- The particle isn't green

- The particle isn't not-green

However, the combination of both of these statements is problematic under standard logical analysis, where the first statement, “The particle isn't green”, may be transformed into “The particle is not-green”, which contradicts the second statement.

Therefore, when we invoke the law of the excluded middle, we must be sure to take into account the domain on which the predicate operates. If we wish to be able to conclude that a thing is not-green, we require the following two preconditions:

- The thing isn't green

- The predicate green can be applied to the thing (i.e. the thing is in the domain of the function green)
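The two preconditions above can be sketched as a predicate that reports when it has been applied outside its domain. The representation of things as dictionaries with an optional `"color"` entry, and the use of `None` to mean “not applicable”, are assumptions made for illustration.

```python
from typing import Optional


def is_green(thing: dict) -> Optional[bool]:
    """Return True or False inside the predicate's domain, None outside it."""
    if "color" not in thing:
        return None  # the predicate cannot be validly applied
    return thing["color"] == "green"


def is_not_green(thing: dict) -> Optional[bool]:
    """Infer not-green only when the predicate green applies to the thing."""
    verdict = is_green(thing)
    return None if verdict is None else not verdict


leaf = {"name": "leaf", "color": "green"}
tomato = {"name": "tomato", "color": "red"}
particle = {"name": "invisible particle"}  # has no color at all
```

For the tomato, both preconditions hold and `is_not_green` returns `True`. For the particle, both functions return `None`, so neither “green” nor “not-green” is asserted and the contradiction described above does not arise.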

Dimensions are an extension of the concept of dichotomy.

A dichotomy is the simplest form of dimension, which is just a two-way division. More generally, a dimension can have any number of divisions. In less mathematical contexts, dimensions are also known as scales. Scales are typically divided into four types: nominal, ordinal, interval, and ratio (here, the ratio scale is treated as merely a type of interval scale). The three types that remain might also be called unsorted, sorted, and measured dimensions.

Nominal dimensions have unordered parts.

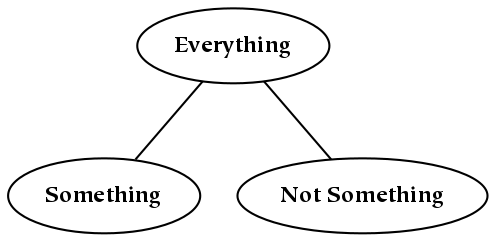

A nominal dimension is unordered in the sense that there is no basis to assign relative positions to things. In the figure below, we depict a nominal dimension by showing three things: everything, a named part (“something”), and the complement of that part (“not something”). As this is a nominal dimension, the relative left-right position of the children is not an essential characteristic.[23] For example, if “something” were to the right of “not something” in the diagram below, it would not make a significant difference:

Again, nominal dimensions may determine any number of parts. For a nominal dimension with N parts (or children), we refer to the corresponding division as an N-way division. If the parts do not overlap one another, which is the case for the diagrams in this book, this is also an N-way partition.

Ordinal dimensions are nominal dimensions that have an associated order.

In an ordinal dimension, the relative positions of the divisions have significance. In other words, if a dimension is ordinal, then it imposes an order (or at least a partial order) on the parts that it defines. As an example, finishing first, second, or third in a marathon constitutes an ordinal dimension: knowing the position does not tell you exactly what the winner's time was; it only conveys that one time was greater or less than another.

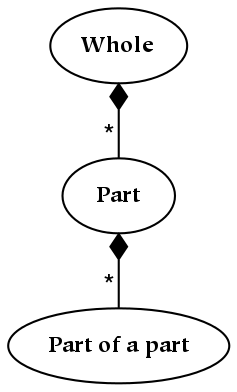

The diagram below depicts an ordinal relationship among a whole and two parts. We know that child things (parts) must be smaller than their parents, so we are able to determine a partial order between the nodes labeled “Whole”, “Part” and “Part of a Part” in the figure below. However, although we know that each part is smaller than its parent part, we don't necessarily know any of the sizes:

Part hierarchies, such as the one depicted above, represent ordinal dimensions because the parthood relation (the vertical dimension) imposes a partial order which the horizontal dimension does not. To reiterate, whether one sibling is to the left or right of another is not (structurally) meaningful, whereas it is meaningful to ask if one part is the parent of another.

Interval dimensions are ordinal dimensions that have an associated measure.

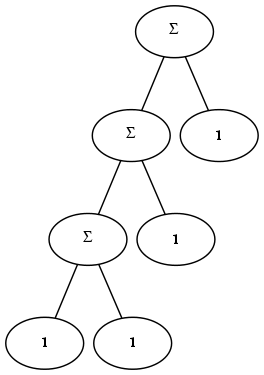

An interval dimension introduces an additional relation between parts that results in a measurable distance (metric) between the designated parts. As a numerical example, 1 is the same distance from 2 as 2 is from 3. One way of creating an interval dimension is to use the same condition for division at each level of the tree. For example, the figure below depicts a structure which is composed of exactly two types of things, a unit element and a sum:

Trees which have a fixed metric structure can be described with very few parameters. For example, because every numerical node in the tree above is identical, one can compute the number corresponding to any node in the tree. In the general case, interval dimensions are more flexible than this example illustrates: for example, they need not be linear (e.g. a logarithmic scale could be established by using multiplication instead of addition).
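As a rough sketch of the point about fixed metric structure, the values along such a dimension can be generated from a single rule applied at every level. The function names below are hypothetical, and the code does not reproduce the figure; it only illustrates that addition gives equal distances between neighbors, while multiplication gives equal ratios (a logarithmic scale).

```python
from typing import List


def additive_scale(unit: float, levels: int) -> List[float]:
    """Apply 'previous + unit' at every level, starting from the unit itself."""
    values = [unit]
    for _ in range(levels - 1):
        values.append(values[-1] + unit)
    return values


def multiplicative_scale(base: float, levels: int) -> List[float]:
    """Apply 'previous * base' at every level, starting from the base itself."""
    values = [base]
    for _ in range(levels - 1):
        values.append(values[-1] * base)
    return values


linear = additive_scale(1, 4)
log_like = multiplicative_scale(2, 4)

# Distances between neighbors on the additive scale are all equal.
steps = [b - a for a, b in zip(linear, linear[1:])]
# Ratios between neighbors on the multiplicative scale are all equal.
ratios = [b / a for a, b in zip(log_like, log_like[1:])]
```

In both cases the whole scale is determined by two parameters (the unit or base, and the number of levels), which is the sense in which such a structure needs very few parameters.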

A hierarchy is a structure corresponding to successive partitions of a thing.

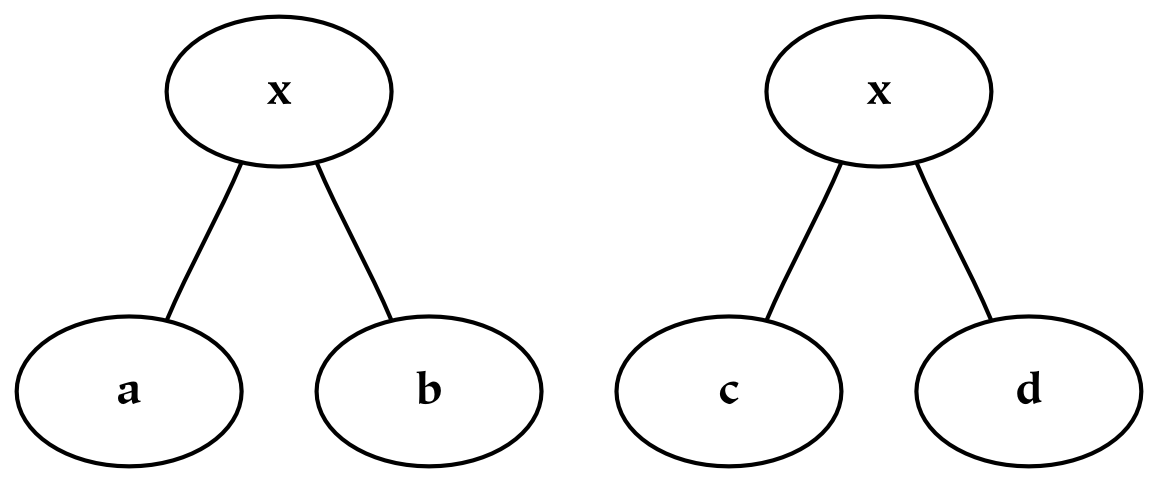

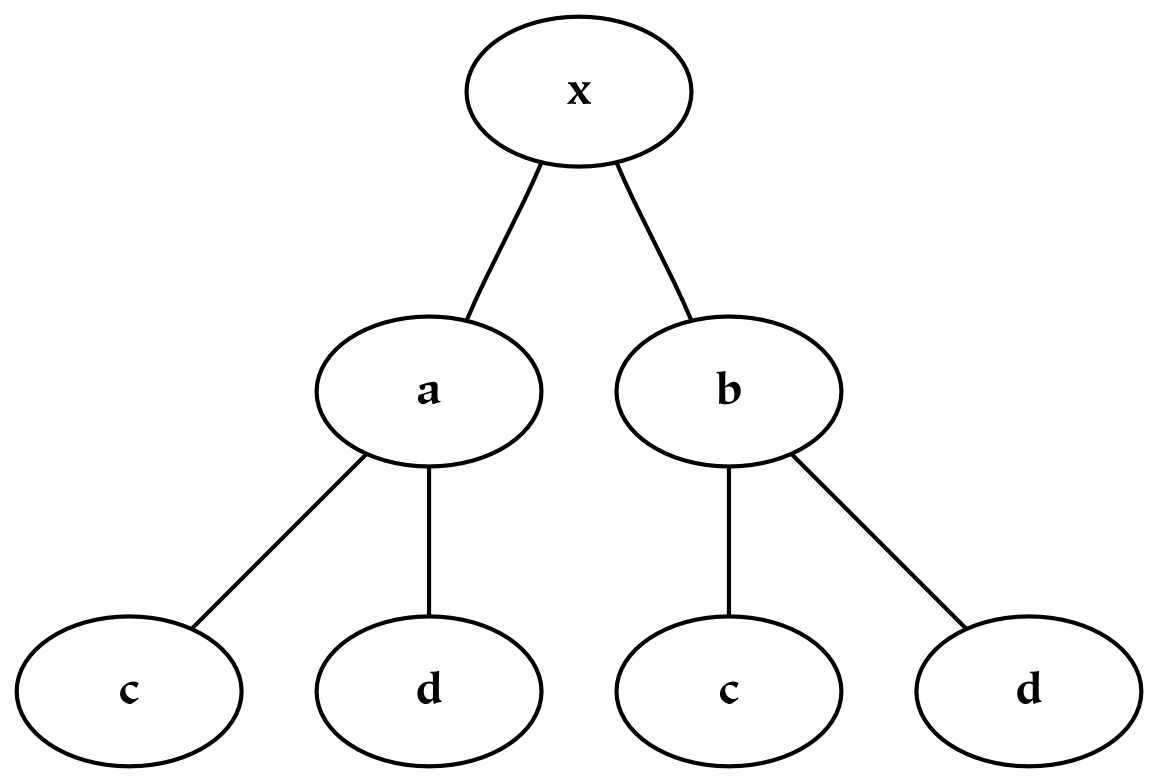

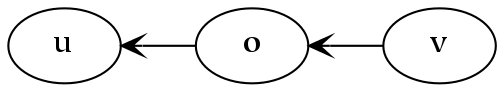

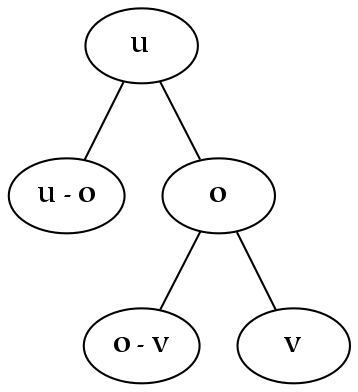

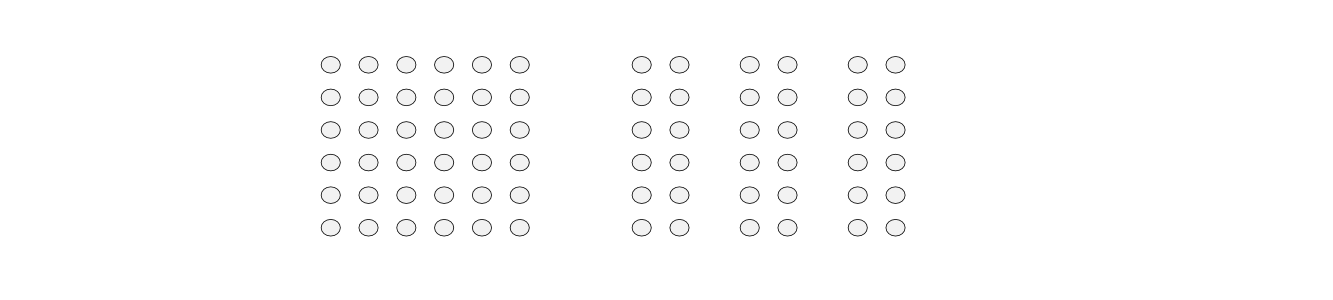

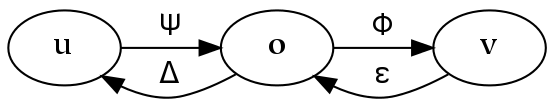

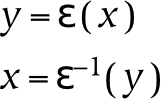

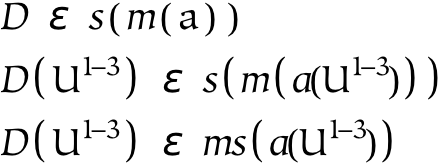

Hierarchies are collections of dimensions (and simultaneously collections of things). They are tree-like structures, or rather root-like structures, since they branch downwards instead of upwards. Hierarchies often represent divisions of a larger whole, where that whole may be a physical, perceptual, or conceptual thing. Graphically, hierarchies can be produced by grafting trees together in a regular way, or appending the branches of one tree to each of the terminal nodes of the other. For example, consider an object, x, which has been divided using two separate dichotomies as follows:

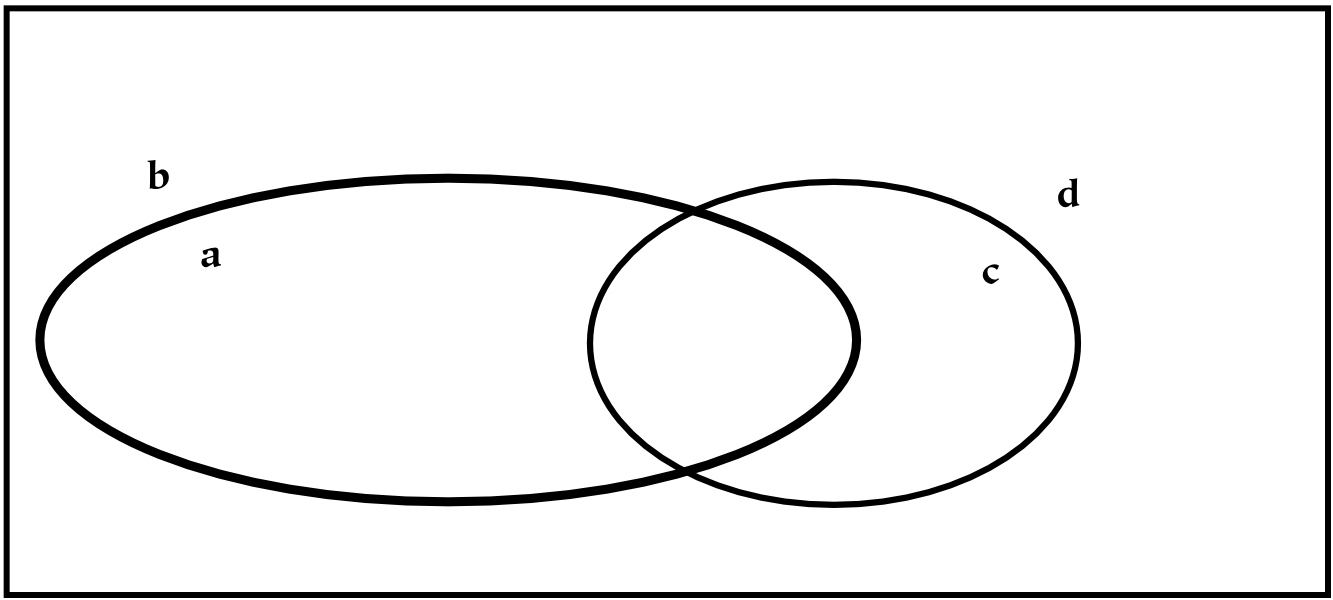

If we wish to combine these two hierarchies, then we may do one of two things. The first possibility is to create a single tree, x, with four children corresponding to (ac, ad, bc, bd). However, this does not preserve the notion of priority: if the division into a/b happens before the division into c/d, this information is lost (or at least, it is no longer preserved graphically).[24] If preserving this information about priority is not required, this tree can also be represented with a Venn diagram, which is essentially a flattened tree diagram. A Venn diagram is shown below, where it has been assumed (graphically) that none of the intersections are empty. The labeling of this diagram is not standard, since here the boundaries are labeled (a/b and c/d). The convention for Venn diagrams is to label the parts, which would yield the parts ac, ad, bc, and bd.

A second possibility for combining the two dimensions is to append the branches of one tree to each of the terminal nodes of the other tree, which in this case results in three layers of nodes. Each path from the root to a terminal node in this tree can be likened to an element of a mathematical Cartesian product (or cross-product), where pairs are formed by taking one element from each dimension. This sort of combination increases the depth of the hierarchy, and this additional structure allows one to encode additional information: the divisions closer to the root of the tree are prior to those further down.
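The two ways of combining the dichotomies can be sketched as follows. This is only an illustration: the nested-dictionary encoding of a tree and the helper names `graft` and `leaf_paths` are assumptions made for this example.

```python
from itertools import product

first = ["a", "b"]    # the prior division of x
second = ["c", "d"]   # the later division of x

# First possibility: a single flat tree with four children; priority is lost.
flat = ["".join(pair) for pair in product(first, second)]


# Second possibility: graft the inner tree onto every terminal node of the outer.
def graft(outer, inner):
    """Return a nested dict in which each outer part is divided by inner."""
    return {part: {sub: {} for sub in inner} for part in outer}


def leaf_paths(tree, prefix=""):
    """Yield the label of every root-to-leaf path, depth first."""
    for label, subtree in tree.items():
        if subtree:
            yield from leaf_paths(subtree, prefix + label)
        else:
            yield prefix + label


nested = graft(first, second)      # a/b is prior to c/d
reversed_priority = graft(second, first)  # c/d is prior to a/b
```

Both `flat` and `list(leaf_paths(nested))` enumerate the same four parts, but only the nested form records which division came first; grafting in the other order gives the same parts under a different priority.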

In some cases, certain of the terminal nodes of the tree will be empty: in those cases, the dimensions were not (entirely) orthogonal. This implies that to some extent, the dimensions encoded the same information. For example, this can happen with the two dichotomies cats/non-cats and animals/non-animals, since there are no cats which are non-animals.[25]
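A small sketch can make the empty terminal node visible. The sample of things below is invented for illustration, as is the helper `empty_cells`; it simply lists the combinations of the two dichotomies that nothing in the sample occupies.

```python
from itertools import product

# Hypothetical sample of things, each tagged along two dichotomies.
things = [
    {"name": "Tom", "cat": True, "animal": True},
    {"name": "Rex", "cat": False, "animal": True},
    {"name": "pebble", "cat": False, "animal": False},
]


def empty_cells(items, dim_a, dim_b):
    """Return the (dim_a, dim_b) value pairs that no item occupies."""
    occupied = {(item[dim_a], item[dim_b]) for item in items}
    return [cell for cell in product([True, False], repeat=2)
            if cell not in occupied]


# The only unoccupied combination is (cat, non-animal).
unoccupied = empty_cells(things, "cat", "animal")
```

An empty cell in a finite sample is only a hint that the dimensions overlap; here the cell is empty for the stronger reason that being a cat already implies being an animal.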

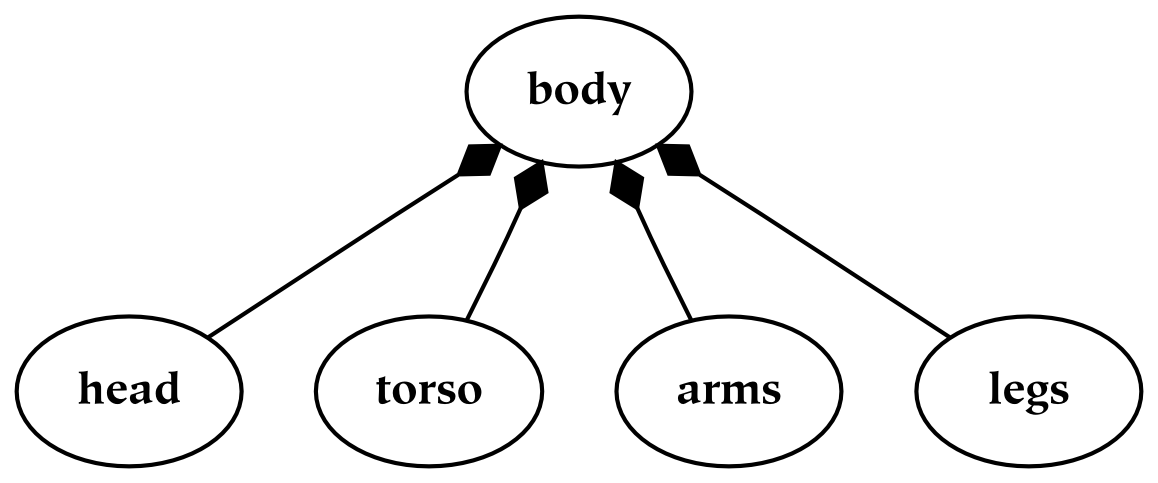

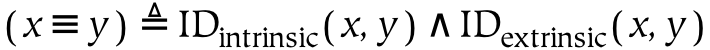

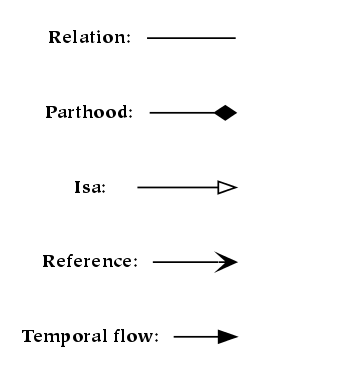

There are two types of common hierarchies which should be carefully distinguished. The first type of hierarchy is known as a meronomy, in which the children are parts of the parent. In the figure below, a meronomy is depicted in which the whole (the entity at the top of the diagram) is a human body, and the parts are the things that compose or constitute the body. This parthood relationship is denoted with shaded diamond arrowheads:

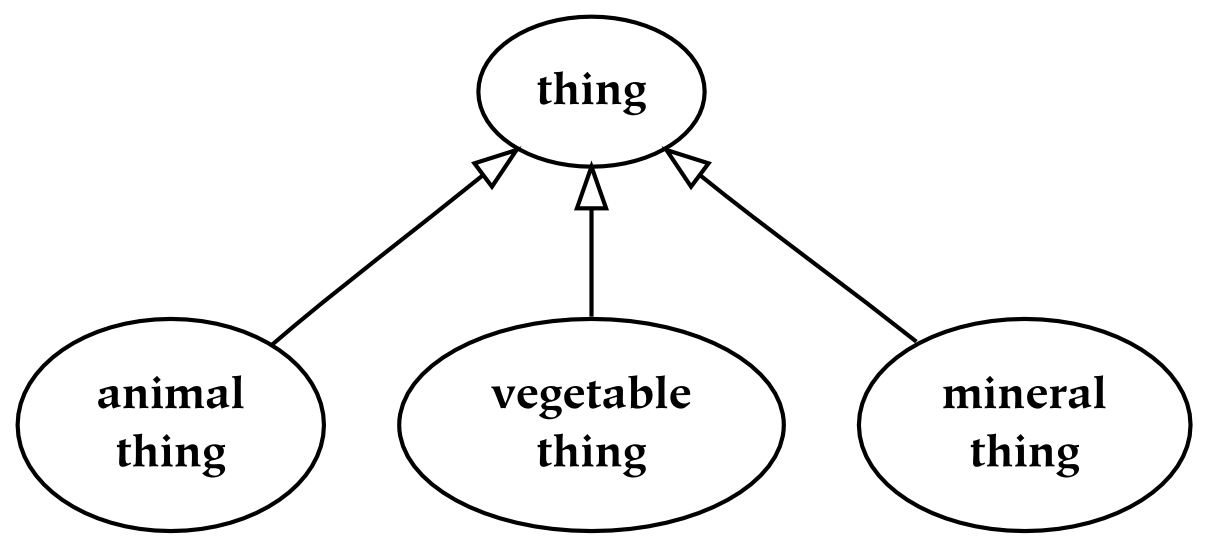

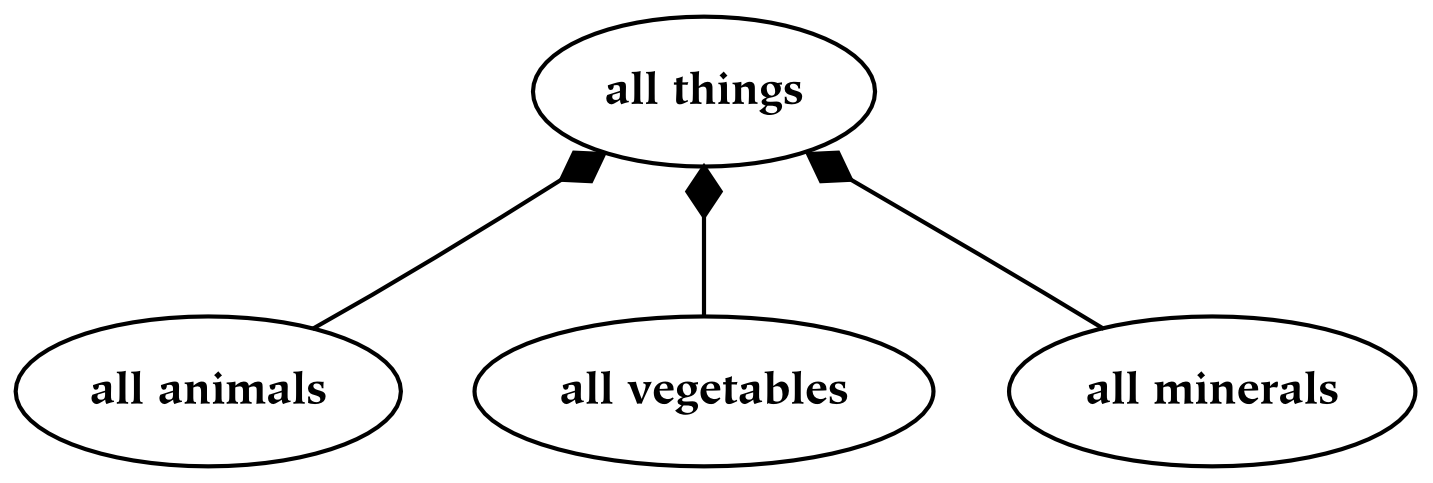

The second type of hierarchy is known as a taxonomy, in which the child things are kinds of the parent things. A taxonomy is similar to a meronomy in that the collection of all the kinds of a thing is a part of the collection of all things; it is different in the way that parts are formed. Perhaps the most important difference is that a taxonomy is typically composed of abstract entities: it is composed of types of things, instead of things themselves. In the following taxonomy, the abstract type “thing” is depicted at the top; it is divided into three types: animal things, vegetable things, and mineral things. To denote this is-a relationship, as opposed to the has-a relationship of meronomies, empty triangle arrowheads are used:

As an example of the difference between meronomies and taxonomies, an animal is-a thing, but a head is-a-part-of-a body (or a body has-a head). However, the collection of all heads is a part of the collection of all bodies, and the collection of all animals is a part of the collection of all things. In both cases, if the hierarchy partitions its whole, then the combination of all the children occupies a space identical with that occupied by the parent.
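Readers familiar with programming may recognize this distinction as the one between inheritance and composition. The sketch below is only an analogy under that assumption; the class names mirror the examples in the text, and the `parts` attribute is a hypothetical way of recording parthood.

```python
class Thing:
    """Root type of the taxonomy."""


class Animal(Thing):
    """Taxonomy: an animal is-a thing (a subtype relation between types)."""


class Head(Thing):
    """A head is-a thing, but it is not a kind of body."""


class Body(Thing):
    """Meronomy: a body has-a head (a parthood relation between things)."""

    def __init__(self):
        self.parts = [Head()]


body = Body()
```

Here `issubclass(Animal, Thing)` expresses the is-a relation between types, while membership in `body.parts` expresses the has-a relation between particular things; a `Head` is found among the parts of a body without being a subtype of `Body`.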

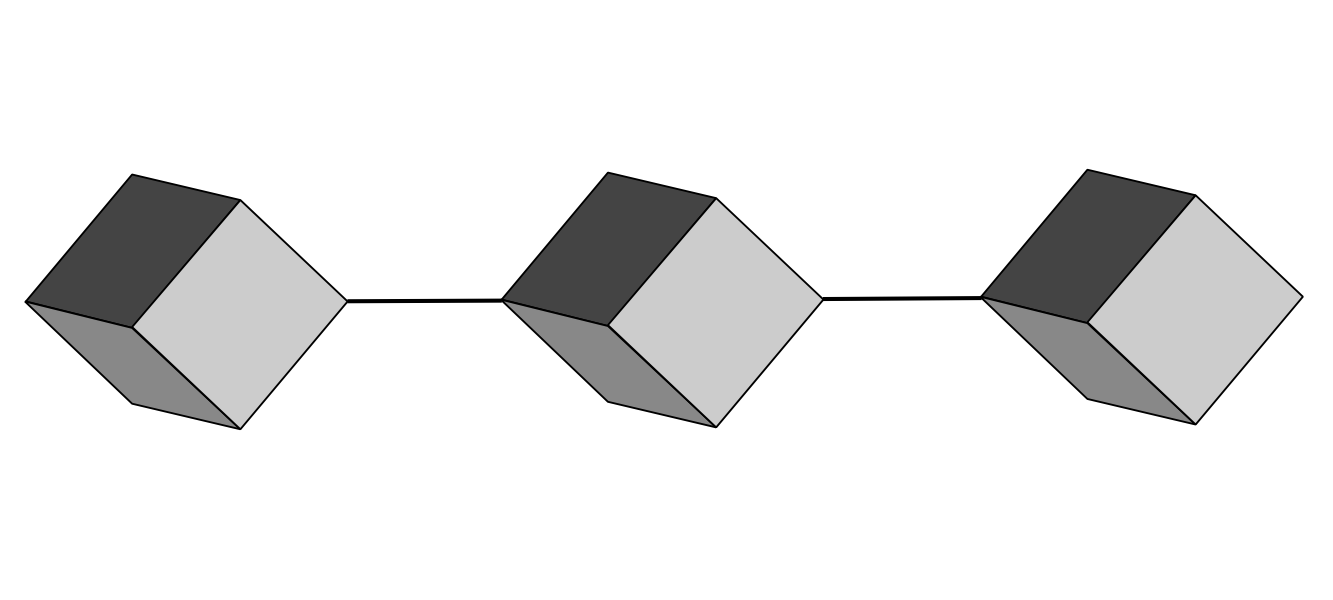

In the following diagram, we represent the extension of the previous (abstract) taxonomy: in other words, the types of the previous taxonomy are represented in this diagram as a set of tokens. An essential difference between this hierarchy and the previous one is that this meronomy consists of nodes which are discontiguous (and plural), while the previous taxonomy consists of nodes which are abstract (and singular). For example, the matter corresponding to “all animals” is distributed in space, whereas “animal thing” is an abstract type rather than a physical entity.

Discontiguous things are often seen to be less real, in some sense, than their connected counterparts: hence, nodes in a meronomy typically represent contiguous quantities. Accordingly, the previous diagram is often represented with many contiguous nodes, such that all particular animal, vegetable, and mineral things are listed.[26]

As concepts occupy positions in ontological hierarchies with a single root, the notion of ontological priority is introduced.

To refer to the fact that one concept is above another in a hierarchy, we say that it is ontologically prior to the concept which is lower in the hierarchy (the diagrams in this book follow the convention that knowledge starts at the top). Hence, to understand the origins of knowledge, we should understand which categories are primary, and especially which categories are necessarily primary.

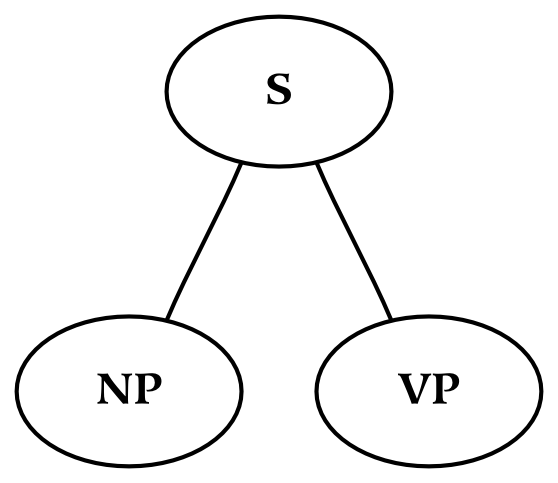

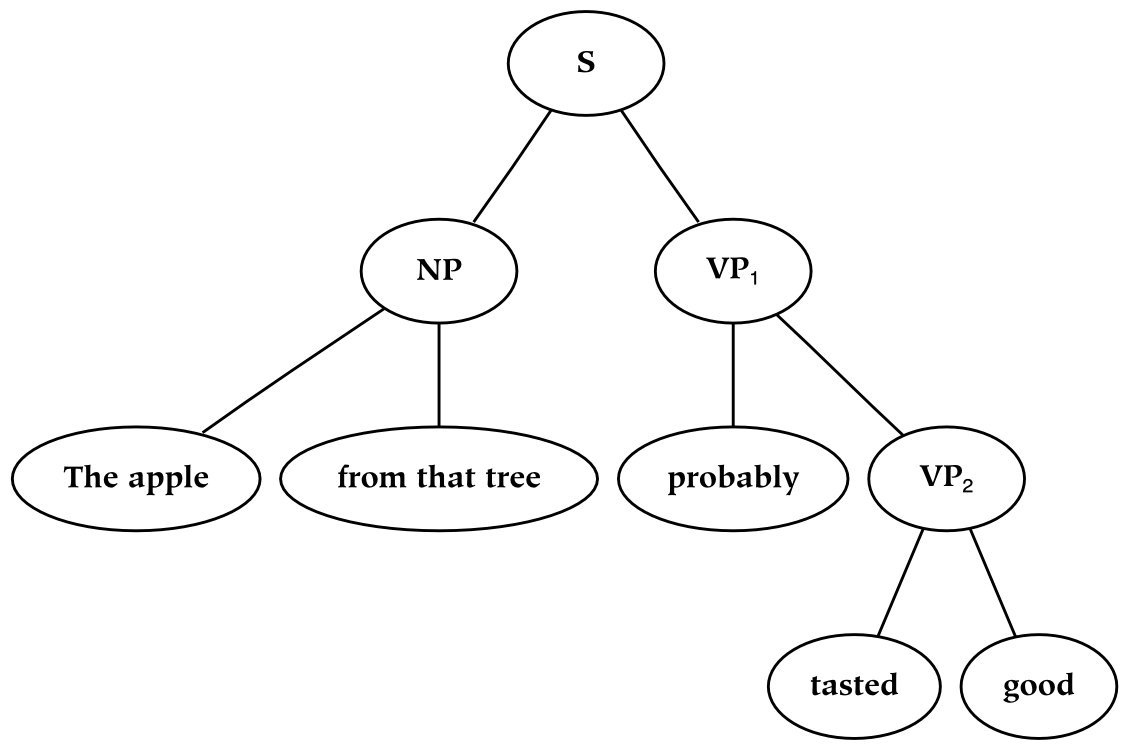

The notion that a hierarchy underlies concepts is an extension of what Noam Chomsky called the deep structure of a sentence (the deep structure of a sentence is similar to the tree diagram of a sentence). This deep structure is present in our language, but it is not immediately identifiable (the part of the sentence that is immediately accessible to our perception is known as its surface structure). The hierarchy in this book is an extension of deep structure: for example, even nouns have a hierarchy associated with them.

The proximity of nodes to the root node in a deep structure is significant: humans learn hierarchies over time, and basic ontological categorization must happen before finer categorical detail can be achieved. With respect to the syntax of a sentence, the primary division (that occurs at the root of the tree) corresponds to the distinction between the noun phrase and the verb phrase. With respect to our vocabularies, the words that we learn first tend to occur at the top: words which are defined in terms of other words often occur further down in the hierarchy (i.e. as compared to the words which are used to define them). Of particular interest are the types of words or phrases which necessarily occur at different ontological levels, as opposed to those that just happen to be learned before others by a given individual. For example, perhaps proper nouns must be learned before count nouns, and will therefore necessarily occur at an earlier ontological level.

Although structure and history are to some degree inextricably intertwined, ontological priority is more about structure than it is about time of introduction. For example, suppose someone learned the concept “apple” in terms of the concept “fruit”. In this scenario, “fruit” is learned before “apple”, so for them “fruit” is ontologically prior to “apple”. However, if that person has subsequent direct experience with apples, it is no longer the case that “fruit” is necessarily ontologically prior to “apple” (although it remains the first concept to be learned).

The number of dimensions of a thing is conceptually increased by iterating something along a singleton dimension.

Parts cannot have a dimensionality which is different than the space that contains them. Therefore, increasing the dimensionality of an existing universe by collecting a large number of objects (or reducing the dimensionality by slicing a thing along one of its existing dimensions) is not strictly possible.

However, references to things, understood from within the referential domain (i.e. understood as references instead of as parts), may have a dimensionality which is different than the dimensionality of the things to which they refer. Further, the references themselves may be collected in a referential space. The effect of doing so is to increase the dimensionality of the things, by abstracting over them.

We will return to the topic of references in future sections, since they have not yet been formally introduced. For now, the increase in dimensionality will be depicted with atoms. Atoms should be conceived of as having an atomic extent in a large number of dimensions (as opposed to a zero size, which is the case for mathematical points). This extent is similar to how one might conceive of a piece of paper: although we may treat it as a two-dimensional object in a large number of contexts, it is actually of a higher dimensionality (otherwise adding pages to a book would not add thickness).

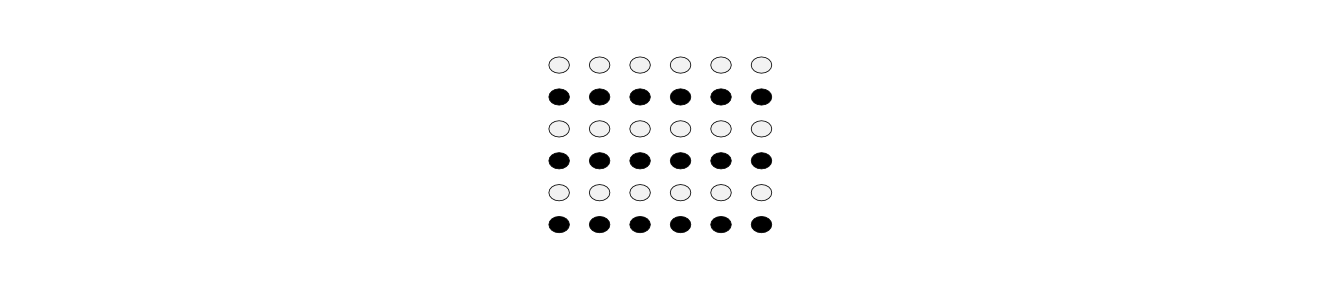

In the figures below, we show the process of adding dimensions to an atomic entity (i.e. one which has no parts). In the first figure, there is simply an atom: it has no extent which can be further subdivided along any dimension. To turn this description around: since it is not possible to sub-divide an atom, an atom does not have any dimensionality.

In Figure 2.11, “A Line: One Dimension”, we iterate the object shown in the first figure, in a direction which is orthogonal to any non-atomic extents already present (which is easy to do for an atom, since it cannot have orientation). In this way, we end up with a line:

This process is repeated to create the second and third dimensions, corresponding to Figure 2.12, “A Plane: Two Dimensions” and Figure 2.13, “A Space: Three Dimensions”. In the first case, many lines, iterated over a new dimension, can be represented as a plane. In the second case, many planes, indexed by a new (orthogonal) dimension, form a three-dimensional space (which may be envisioned as a cube).
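The repeated step of iterating an object along a new dimension can be sketched with nested lists. This is a loose illustration only: the string `"atom"`, the helper names `iterate` and `dimensionality`, and the choice of three copies per dimension are all assumptions made for the example.

```python
import copy

ATOM = "atom"  # hypothetical stand-in for an entity with no parts


def iterate(thing, count):
    """Index `count` copies of `thing` along one new dimension."""
    return [copy.deepcopy(thing) for _ in range(count)]


def dimensionality(thing):
    """Count how many times an atom was iterated to produce `thing`.

    Assumes every level holds at least one element.
    """
    depth = 0
    while isinstance(thing, list):
        thing = thing[0]
        depth += 1
    return depth


line = iterate(ATOM, 3)    # many atoms, indexed by one dimension
plane = iterate(line, 3)   # many lines, indexed by a second dimension
space = iterate(plane, 3)  # many planes, indexed by a third dimension
```

Each call to `iterate` adds exactly one index that is needed to pick out an atom, so the nesting depth plays the role of the number of dimensions; the same step could be applied once more to index many spaces along a timeline.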

The fourth dimension is time. In other words, time may be conceived of as simply another (spatial) dimension. Of course, we seem to move in it in only one direction, so our behavior with respect to it is different. We certainly perceive it differently (if in fact it is perceived, as opposed to being conceived), and we treat it very differently linguistically, but there is no reason to believe that it is of an altogether different nature than the first three spatial dimensions. In fact, it is treated almost identically in modern physical equations dealing with spacetime. In any case, as with the previous dimensions, this novel dimension is introduced by iterating a lower-dimensional object along a new axis, which is orthogonal to those which exist so far.

There are some objects, such as the tesseract (the four-dimensional analogue of the cube), which have four spatial dimensions, none of which is a temporal dimension. Such objects are quite difficult to visualize, precisely because another spatial dimension is interposed between the typical three spatial dimensions and the temporal dimension. Perhaps the use of a fourth spatial dimension (instead of the temporal dimension) is desirable because the temporal dimension is perceived in a radically different way than the spatial dimensions. One motivation for not acknowledging time as the fourth (spatial) dimension could be due to the constraints or tendencies of language (and thought). In particular, perhaps there are syntactic constraints that encourage us to extend the dimensionality of noun phrases, rather than add dimensionality to the verb phrase (the analogy between nouns/verbs and space/time is explored in greater detail later in the book).